HANABI

.

. . .'. \ /

\ / .'. .' '.' ' -= o =-

-= o =- .' ' / \

/ \ '

'

In this post I will go through how I implemented multi-agent environments using Prime Intellect’s stack as part of their RL Residency. My objective is two-fold:

- To show how multi-agent environments can already be designed using the verifiers library and how training can be done on such environments using both prime-rl and hosted training.

- To propose and discuss abstractions that could be included into verifiers to allow for more ergonomic multi-agent designs in the future.

The main focus is Hanabi, a cooperative card game that is already a well-known environment in multi-agent RL (MARL) research. Subsequently, I extend the proposed abstractions beyond the cooperative setting and explore per-agent rewards, asymmetric roles, and multi-policy training through a series of classic game-theory environments.

Motivation

Many tasks involve some degree of exchange with other agents (human or AI). In traditional (non-LLM-based) RL, multi-agent setups are common and fairly well studied. More broadly, multiagency connects to fundamental research on the nature of intelligence itself — programs like Blaise Agüera y Arcas’ What is Intelligence? initiative frame social interaction, communication, and coordination as central to understanding cognition. Yet in public/open-source LLM-based RL, multi-agent setups are arguably still very under-explored. Having said that, it seems recently the field has shown much more interest in the topic: examples range from Anthropic’s multi-agent research system last year and this year’s Claude Code teams to Kimi’s Agent Swarms, and even stuff like Moltbook and, perhaps less-obviously multi-agent setups like RLMs or speculative forking. While most of these efforts focus on multi-agent orchestration at inference-time, the question of how to train in multi-agent settings is still quite nascent, at least out in the open. In the spirit of Prime Intellect’s inspiring work, I want this project to contribute to the open, shared research around these topics, especially the latter.

The game

I first heard about Hanabi through the work of Jakob Foerster. I believe it was either through The Hanabi Challenge or the BAD for Deep MARL papers. A friend of mine, sci_sic, shortly after bought the game and encouraged me to do so too. It’s a very fun game! And ever since then it’s actually been my go-to whenever we have friends or family over at home.

I searched through my photos for a Hanabi game-night. Here’s a cool one I found of my brother and I playing a few years ago:

In short, Hanabi is a cooperative card game for 2-5 players where everyone holds their cards facing outward—you can see everyone’s cards except your own. Players work together to build five firework piles (one per color) by playing cards in ascending order from 1 to 5. Communication is strictly limited: you can only give clues about a single color or number in another player’s hand, and the team shares a limited pool of clue tokens. A clue token is regained when a 5 is successfully played, and discarding cards also restores tokens but risks losing critical cards. Each card played correctly scores 1 point, a complete firework (all five cards 1→5 of one color) is worth 5 points, and completing all five colors yields the perfect score of 25. The game ends when all fireworks are complete, the deck runs out (one final round each), or the team makes three mistakes by playing unplayable cards.

So Hanabi is simultaneously like team solitaire + blind-man’s bluff insofar as it has an inherent objective of arranging the cards in a specific order (i.e. the fireworks) but in a collaborative way, with the main constraint being the fact that each player has imperfect information about the game state. I’ll expand on the things I find most interesting.

Emergent communication

From my experience, the richness of the game comes from the emergent communication conventions that arise naturally from the interaction between players. The clearest example of this might be when, during play, a participant wants to communicate something that is, so to speak, under-determined. For example, assume the fireworks show:

And you see the other player has the following hand:

Imagine such player knows nothing else about their hand from previous interactions. Evidently, it would be good to communicate to them that they have a B1, which is playable. But hints can only communicate information about numbers or colors. Would it be best to communicate that such a card is a 1? If so, the player may take it as a hint that it’s a playable 1, or perhaps it’s best to communicate it’s blue, and hope that the player interprets the hint as saying it’s a playable blue. Both are valid paths, and the success or failure of effective communication following either could set a standard for subsequent interactions.

The philosophical tenet behind this runs deep and beyond the scope of this post, but it seems to me this is the sort of environment that allows us to explore how communication rules are emergent phenomena arising from social interaction. Wittgenstein argued that meaning cannot exist in isolation but only through shared social use; Hanabi makes this concrete by forcing players to build shared interpretive conventions from scratch.

Theory of mind

Another important aspect that is possible to emphasize in Hanabi is that of theory of mind (ToM), which refers to “the capacity to understand other individuals by ascribing mental states to them”. In Hanabi, ToM is not just useful, it is a structurally necessary in the sense that good performance follows from the each player’s ability to infer what the others are trying to communicate via partial hints and also what and how to communicate to others in order to maximize the probability of them playing well.

You can notice how, near the end of a game, players can start counting remaining cards more easily in order to infer their hand. Communication follows not only from what players can infer directly from counting, but also from trying to count from other player’s perspective. An example:

Imagine it’s near the end of the game, and fireworks show:

The other player has the following hand:

There are some cards in the deck still, and the rest of the cards are in the discard pile, and you can see all blue cards are either in the discard pile, except for that B4 the other player has and 1 B5 and 1 B4, which, from your perspective, could be either in the deck or in your hand.

You have B4, R4, Y2, R1, Y3, which you can’t see of course.

It’s the other player’s turn, but they don’t know anything about their cards just yet. They can count and infer which cards they have but they don’t know which is which. They proceed to give you a hint and tell you which card is the blue one. You reason that although your blue card could be either a 4 or a 5, the fact that they hinted you the color may indicate that it’s a playable card (perhaps enforced by previous successful communications). You could play the card now, though an alternative could be to actually discard such card, with the hope that when you do so, the other player will deduce that you did because you could see the other B4 in their hand.

This example shows that situations requiring nested theory of mind arise naturally in Hanabi. Specifically, it illustrates third-order ToM: (1) you model the other player’s reasoning about their own hand; (2) you model how they model your likely actions given what you can see; and (3) you choose an action (discarding) precisely because you can predict how they will interpret it — reasoning about their reasoning about your reasoning.

Cooperation

One last aspect about Hanabi is the fact that it’s a fully-cooperative / common-payoff game. That is, there’s no player-specific attribution of scores – it’s about getting the highest score together. This matters theoretically because most games are adversarial and cooperative settings are arguably under-explored, and practically, because the shared reward makes it a lot easier to implement as a training environment, given we don’t care about credit assignment across agents. Though, as I’ll show later, moving beyond the common-payoff setting forces exactly this issue to the surface.

Children's Games, Pieter Bruegel the Elder (1560), depicted on the cover of Shoham and Leyton-Brown's book on multi-agent systems

The cooperative aspect of Hanabi has another interesting technical implication: learning to cooperate in tandem is different than learning to cooperate irrespective of who you’re playing with. Playing with the same group of friends makes it easier to always exploit the same conventions, but a good player may also learn to adapt to different group signals, or adapting to less or more experienced players. Self-play allows for the former, and it’s the main focus of this work, but cross-play is an interesting direction for further work1.

Experiments

There’s nooks and crannies to all the experiments I did, but I will try to focus on the most salient things from here on out. One important thing to note, though, is that most of the experiments I did were in 2-player settings, mostly for the practical reason that adding more players increases the number trajectories used to train per-sample, making it harder to inject sample-diversity in the training steps, without a clear benefit on the objectives of this work.

Preliminary results

Before outlining the details about the environment, I would like to show some of the preliminary experiments I had with Hanabi, which illustrate the baseline behavior of models in this environment.

Baseline

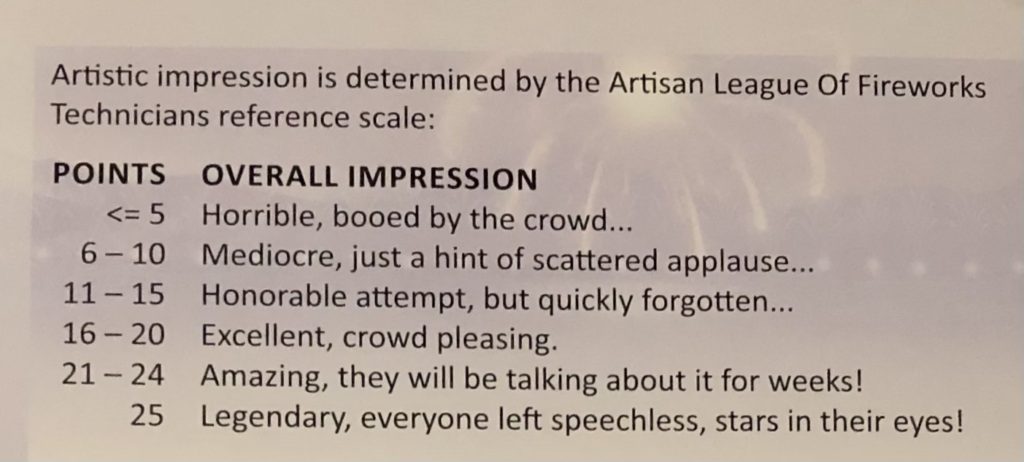

One of the very first things I did after constructing a basic version of the environment was to benchmark some models. To my surprise, models like gpt-4.1-mini already did fairly well in the game, getting mean-scores of ~3.46 but quite a high variance in the score distribution, with some rollouts reaching as high as 18 points, which, by the standards of the game, is considered to be within the “excellent, crowd pleasing” range:

Specifically, the traces showed sound reasoning steps. An example from a rollout:

The game just started. All fireworks are at 0, no cards have been played, and I have no knowledge about my own hand (all unknowns \"??\"). Player 1 has some cards visible: Y5, R3, B1, Y1, G2.

Since my hand is totally unknown, a good first move is to give a hint to player 1 to help them identify playable cards or card value. The earliest playable cards are all 1s. Player 1 has Y1 at position 3. Revealing ranks (\"1\") or colors (\"Y\") will help them know that the Y1 is playable now.

I'll give a hint about rank '1' to player 1. This hint will highlight Y1 and B1 (they have B1 as well). Among those, only Y1 is currently playable (the 1's because fireworks are at 0). Giving a rank hint '1' lets player 1 know they have some 1s to consider playing.

Using 1 info token (we have max 8), but that’s fine.

So: action is \"1H1\" (hint to player 1 about which cards are '1').

Of course, the thought-process here was not perfect. For instance, both Y1 and B1 here were playable, but it did, in the end, reach the most obvious action to start the game with.

RL

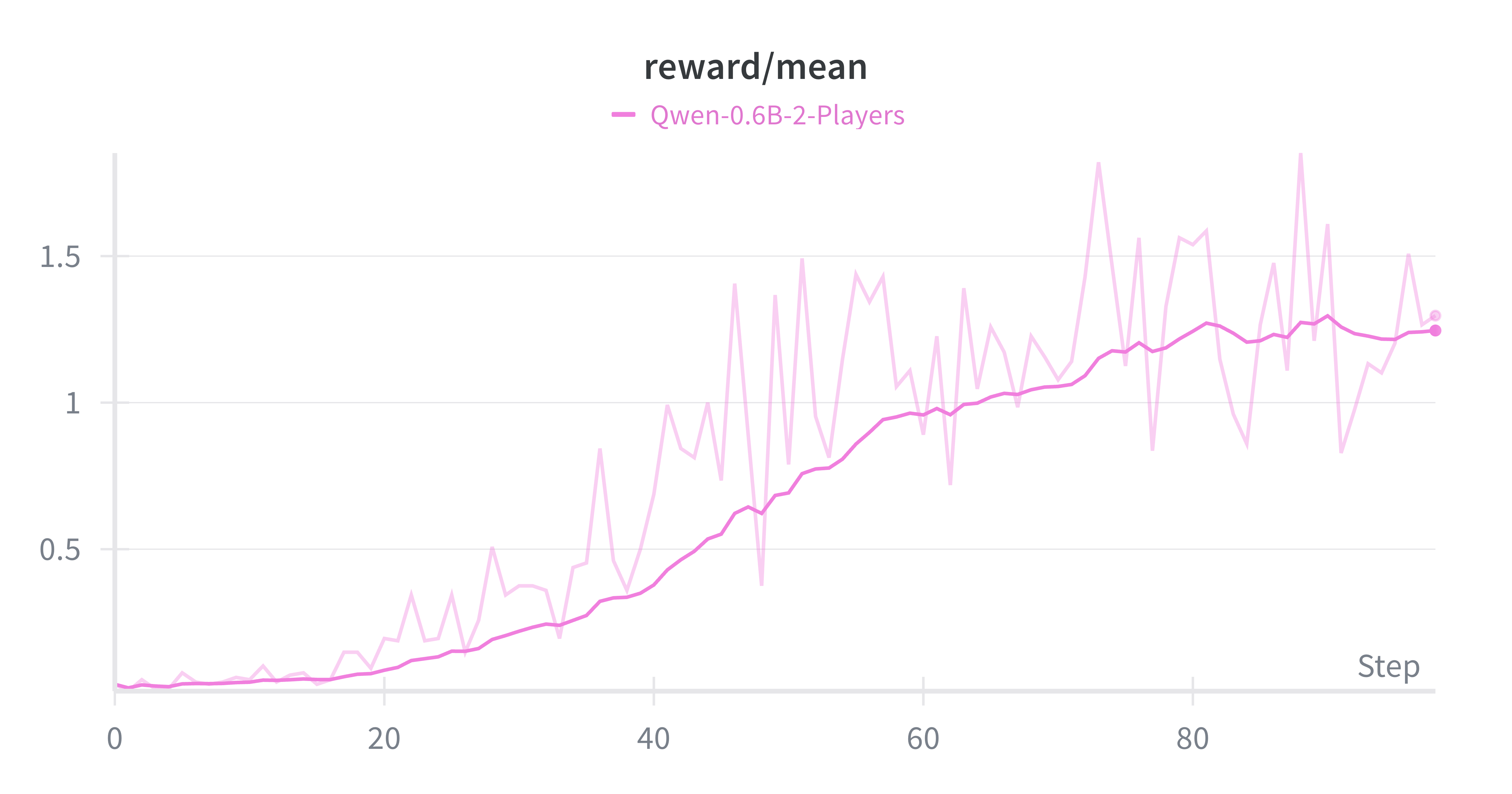

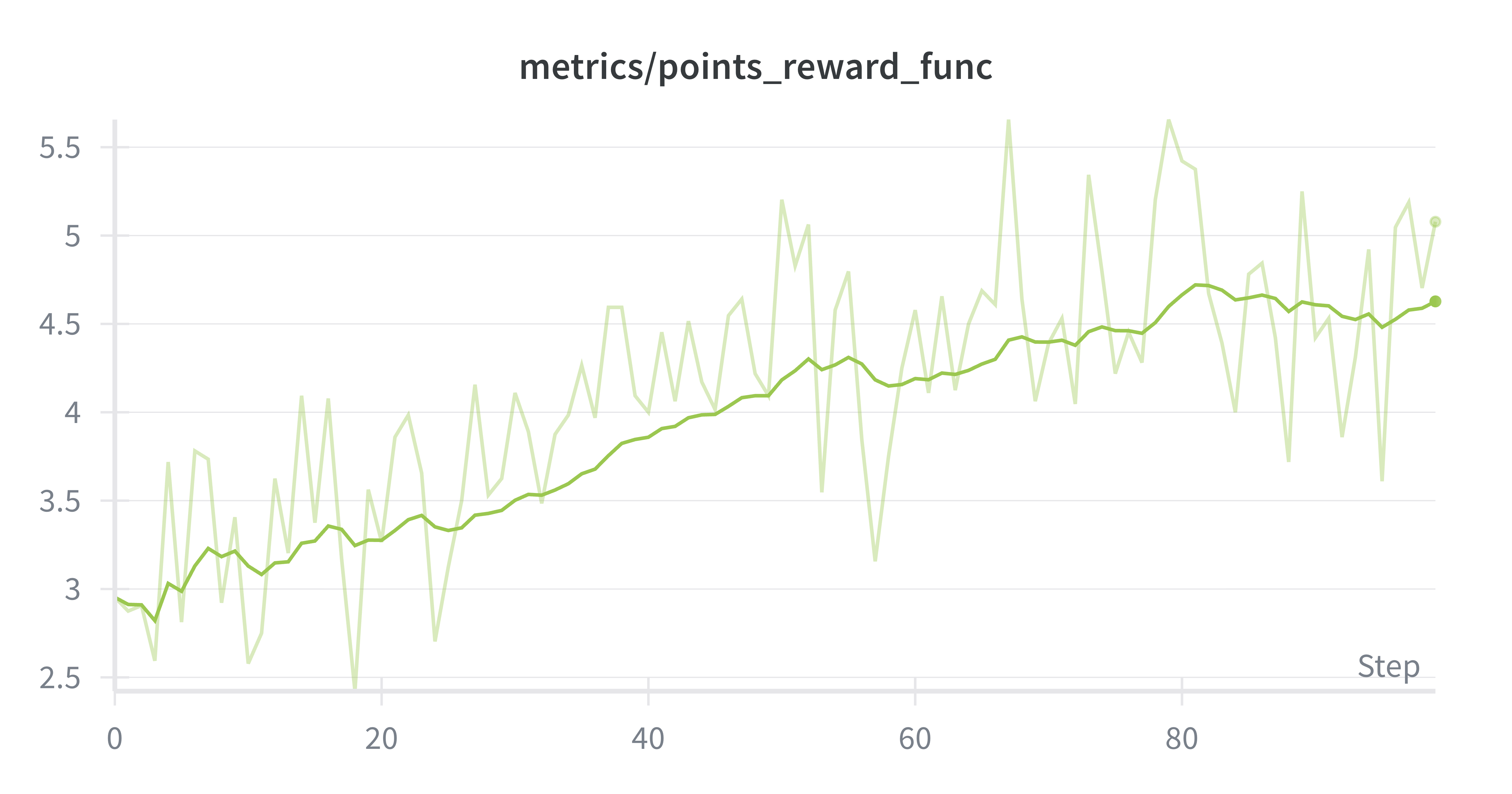

This was enough to start with. A first effort was directed towards finding out how good a Qwen3-0.6B model could get with no previous SFT-warmup at all. RL pushed it at least towards non-zero scores:

The model started with overly extensive and unsound reasoning, failing to output a valid action:

Okay, let's see. I need to figure out the next move to build the fireworks. The game is about playing cards from 1 to 5, so I need to play each card one after another, maybe with some hints.

First, looking at the current state. The player has hands hand <player_0_hand>, which is unknown, but there are 5 positions. So I need to figure out which set of cards I have. The player_0_hand's info is not fully given, but maybe looking at the cards in player_1's hand. Player_1 has G1, Y5, Y3, Y1, G4. Wait, but the firework has R0, Y0, G0, W0, B0. So each color has a firework start.

The first action I should take is to play a card. The first position is 0, which is colored R. But the player knows that the firework starts here, so I need to check if I have that color. However, since I don't know my own cards, but the player_0_hand is unknown. Wait, maybe I can deduce which color is at position 0. The player's own hand is unknown, but the firework starts with those colors. So if I play R0, that's correct.

But I have to think about possible cards. The order of play is from 1 to 5, but maybe the first step is possible. Also, if I play the first card, it might affect the game. Let me try to play R0. Wait, but the player's hand is unknown. Alternatively, maybe I need to choose based on the available info.

Wait, the player_0_hand's format is ?? ?? ?? ?? ??, which are unknown. But perhaps in this game, we have to deduce based on the other player's information. Player_1's hand has G1, Y5, Y3, Y1, G4. Those positions are probably represented as known colors. So if I play R0, that's correct.

Alternatively, maybe I can check if there's a card that's not among the firework colors yet. The current firework has R0, Y0, etc. So the next action would be to play R0. But how? Because the hand is unknown. Maybe I need to look at player_1's hand again. Wait, the firework starts at their

And by the end of a short training run, managed to reach an average of ~1.25 points. But these scores were attained with suboptimal behavior, like the following:

Okay, so the user provided a game state and I need to figure out what the next action is. Let me start by understanding the current state.

The game state shows that the user's hand is unknown, but the player_0_hand is "?? ?? ?? ?? ??". The player 1's hand is "Y5 R2 B1 G1 B4". The firework colors are R0, Y0, G0, W0, B0. The score is 0, and the deck has 40 cards. The life tokens are 3, which means we have three lives left. The info tokens are 8, so there are 8 hints available.

The user (player 0) hasn't played any cards yet. The next step is to play a card. The available actions are P+position, D+position, or player + H + color/rank. Since the next action is the first action, which is playing a card. The position is 0 because it's the first card in the hand. The card isn't specified, so the action would be <action>P0</action>. But I need to make sure that the position is correct. The user input shows that the first card is index 0. So the answer is to play position 0

These results show that the models learnt to climb up to a reward of at least 1 on average by just learning to always “play” a card at ~random. This was obviously not a strategy that would get very far.

SFT

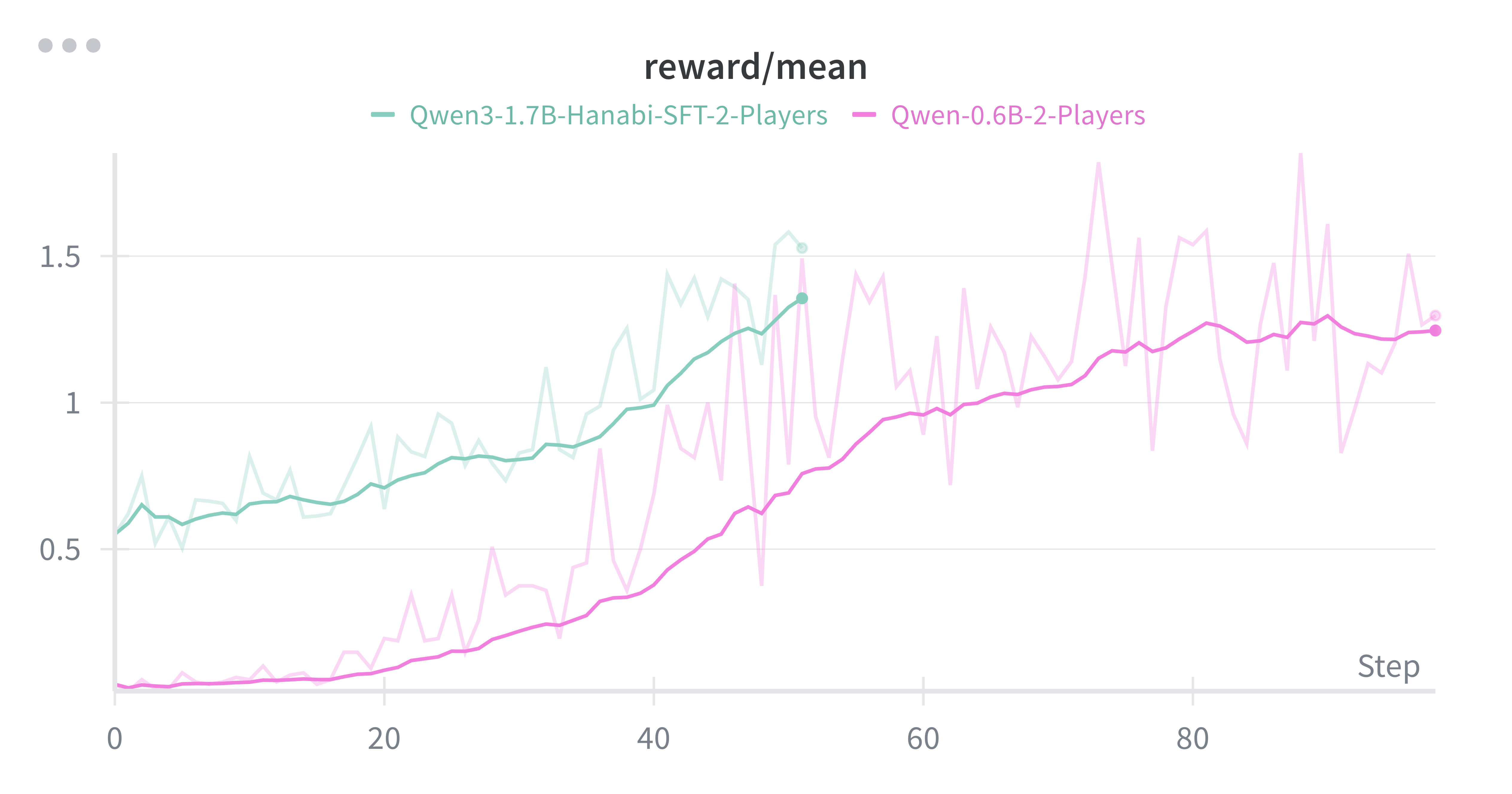

To induce a more adequate behavior prior to the RL run, I created a synthetic dataset, using the gpt-4.1-mini rollouts. This dataset was created with a setting of 50 examples and 2 rollouts per example in 2-player scenarios, resulting in 200 (50*2*2) training traces. This time I tried a slightly bigger Qwen3-1.7B model, which I first fine-tuned (SFT) on this dataset before running RL on top.

The results showed a noticeable improvement over the previous run, probably due to both the change in the base model and the SFT. What mattered more: the reasoning and game-strategy were qualitatively better. For example, this is taken from a rollout at the beginning of the training run which already obtained a score of 2:

At the start of the game, no information tokens have been spent, and our hand is completely unknown (all ??). The other player's hand is visible: W4 W3 G2 B1 B1. None of their cards are playable immediately, as none are 1s. We can't give a hint on color or rank yet, so maybe playing a card that we know is playable now is the best option.

Looking at our own hand, since we have full information tokens (8), we should give a hint to help Player 1 identify cards they can play before the deck runs out. The best hint to give might be rank 1, as all the 1s in Player 1's hand are very valuable and need to be played early.

So our hint could be Player 1 H1, telling them which cards are 1s. This will help them quickly identify and play their 1s earlier in the game, which is beneficial as the deck is full and the first cards to play are those with rank 1.

No immediate risk here as playing a card with rank 1 is the best starting move once the deck is full, and giving a hint will help the other player, which is smart play.

Although it’s clear that the reasoning is not impeccable, it already showed a better game-strategy by opting for an initial hint.

By step 50, we have rollouts with scores as high as 5 and using similar reasoning/strategies at the first turns:

We are at the very start of the game with full knowledge of the other player's hand (player 1) and all info tokens (8). My own cards are completely unknown: "?? ?? ?? ?? ??".

The firework piles are all at zero, so the next cards to play will be the 1s of each color. On player 1's hand, we see Y1, Y5, B4, Y1, W1. There are 3 yellow cards with 1, which means these are the immediate playable cards. Since info tokens are maxed, it is best to give a hint to Player 1 to help them identify their 1s.

Player 1 has Y1, Y1, W1, and Y5, B4. They have two distinct 1s with unknown positions (positions 0, 1, 2 might be part of the 1s, but not sure yet). So, the best initial hint is about the 1s, as they are the next cards to play.

Player 1 has no 1s at 3 or higher with a known rank yet. Since 1s are the only immediate playable cards, it makes sense to give a 1 hint to help them focus on their 1s. This means to Player 1 telling which of their cards are 1s.

We can give a hint like "H1" to Player 1, indicating which cards are 1s. Since info is maxed, no more hint tokens needed.

Therefore, our best initial action is to give Player 1 a hint about 1s to help them play their 1s early.

Standard Hanabi

With the promise of the preliminary results, one first effort to scale-up the training runs was made with the following considerations:

- Full Hanabi games can have 30+ turns. With thinking traces, the sequence length of trajectories can be quite large, at least for small-scale experiments. This was costly during training because the training set-up had to use branching rollouts, which is less efficient than the default interleaving strategy. To make longer runs more feasible the thinking traces were dropped and only actions were required.

- The preliminary experiments were made using XML tags to extract the chosen action from the model’s response. But models like Qwen3 0.6B and 1.7B are non-instruct models and often fail to output a well-formatted response. Instead of using this, the Qwen3-4B-Instruct-2507 was used with tool-calling, which allowed me to bypass format reward signals during training.

Environment

The environment can be found here, in Prime Intellect’s Environment Hub. It has the following structure:

hanabi/

├── config.py # GameConfig dataclass with game constants

├── prompt.py # System prompt template

├── utils.py # Card utilities and game state helpers

├── player.py # Player class with action methods and API calls

└── hanabi.py # HanabiEnv environment, observation generation, and reward

I won’t go into detail in all of it, but I’d like to point at least a few things:

The Player Class

The Player Class represents a player in the game. It’s main method is take_turn, which literally contains the logic to generate the player’s action and execute it. The key logic is contained in the following lines:

# Get response using the verifiers' method (handles logprobs, token extraction)

response = await self.env.get_model_response(

state=state,

prompt=player_messages,

oai_tools=self.env.oai_tools,

)

and:

# Record trajectory with proper token data for training

await self.env.add_model_response(state, prompt_messages, response)

These are responsible for getting a response from the model and record it as a trajectory, which will be used for training.

The rest of the logic in the class in charge of actually executing the action returned by the model (i.e. play a card, discard, or provide a hint to another player).

The HanabiEnv Class

The actual environment is defined in the HanabiEnv class. It extends verifiers’ StatefulToolEnv and contains the logic for Hanabi at the environment level.

The most important method here would be the env_response. In this method, the environment iterates over each player and calls the take_turn method mentioned above.

One thing to note is that, by default, the verifiers library already handles the logic to get and add the model’s response from the default model. In the case of this implementation, such model plays the role of the first player. Then we iterate over the rest of the players, since verifiers does not yet have native logic to handle multi-agent orchestration. What this entails is that the code ends up being slightly awkward, having a blurry line separating the environment from the agent.

Finally, since Hanabi is an environment where players have asymmetric information, the class has a get_observation method, which generates state observations from each player’s perspective. That is, to show to each player the hands of all the other players, but not themselves.

Baseline

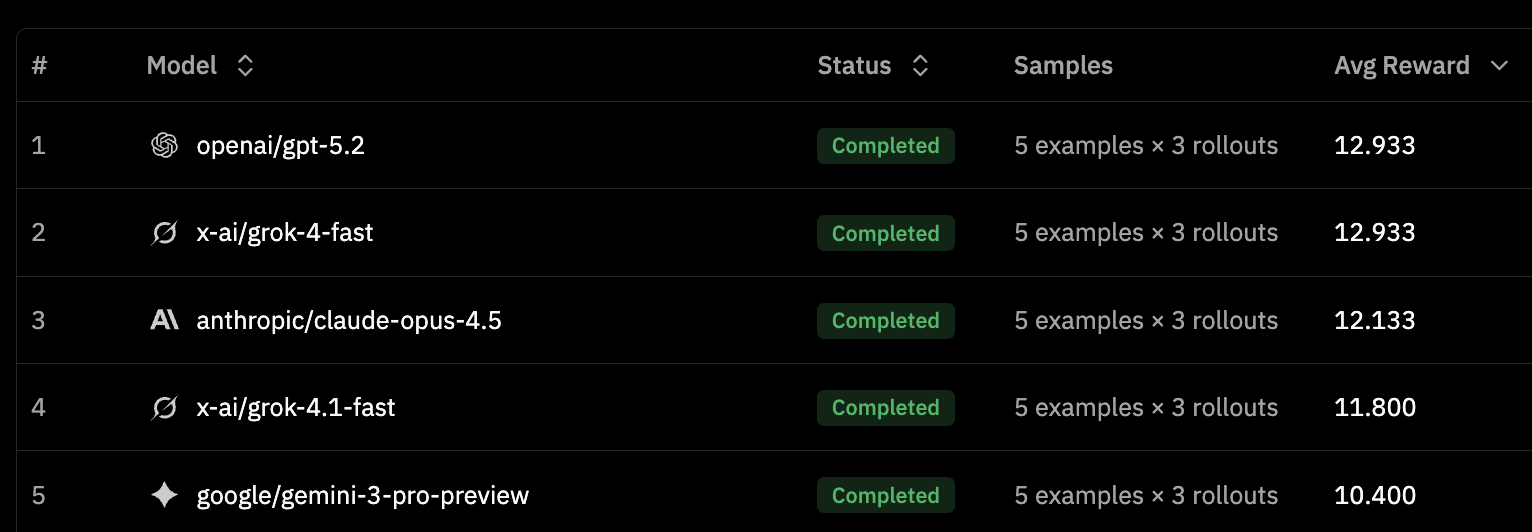

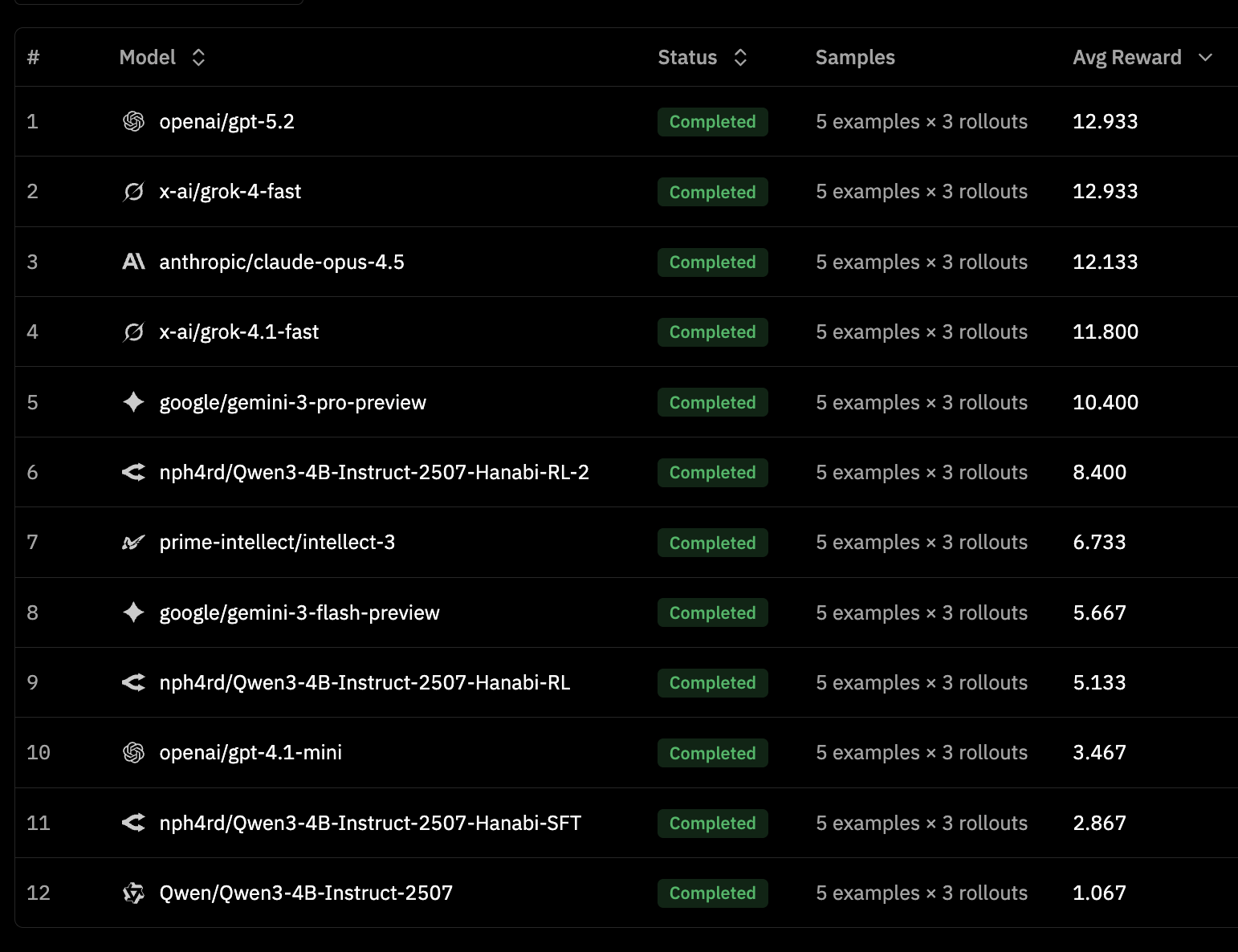

With this implementation, I went for a much more extensive evaluation of proprietary models on the environment. The following table shows the top-5 models from the leaderboard, constructed with Prime Intellect’s super useful eval tool using the default of 5 examples x 3 rollouts:

Other models I evaluated included:

prime-intellect/intellect-3at 6.733google/gemini-3-flash-previewat 5.667openai/gpt-4.1-miniat 3.467 (which I had already mentioned above)

For reference, the base score from Qwen/Qwen3-4B-Instruct-2507 was a mere 1.067. But now onto the good bits…

Synthetic data and SFT warm-up

Using the information from above, I generated a synthetic dataset of rollouts using Grok4-fast, which had the best cost-to-performance ratio from the models I tried. The dataset can be found here – it contains quite diverse rollouts, ranging from scores in the lower 2-5 range to some examples with scores as high as 21 (amazing, by Hanabi’s own standards). It has both rollouts from both player’s perspectives.

Although the Qwen3-4B-Instruct model already fairly well in aligning with the required tool-calling format, I decided to run a short SFT warm-up run to expose the model to examples and have a better baseline from which to jump up.

After the SFT run, the model achieved a score of ~2.9, enough to start RL runs on top of that.

RL

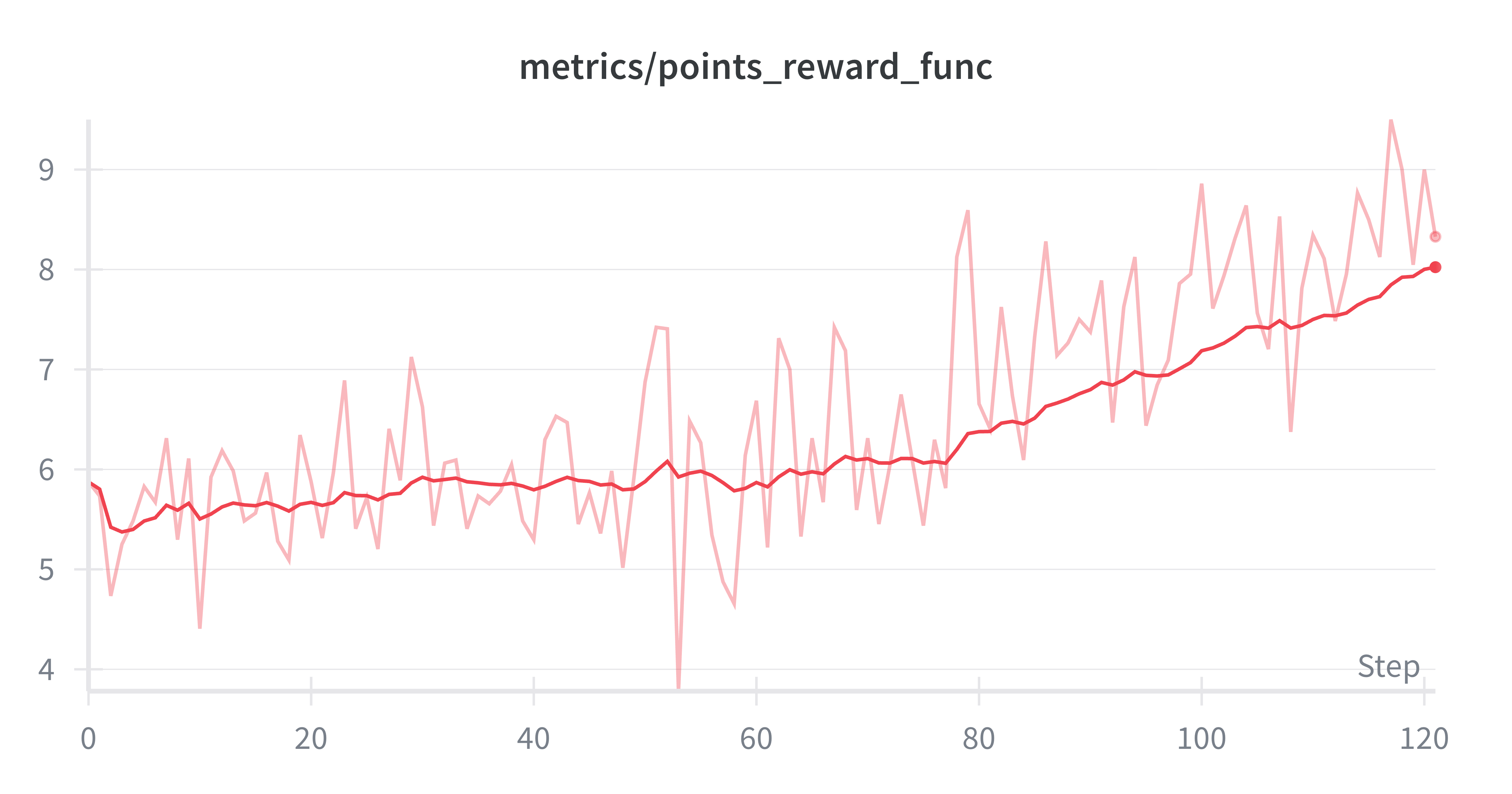

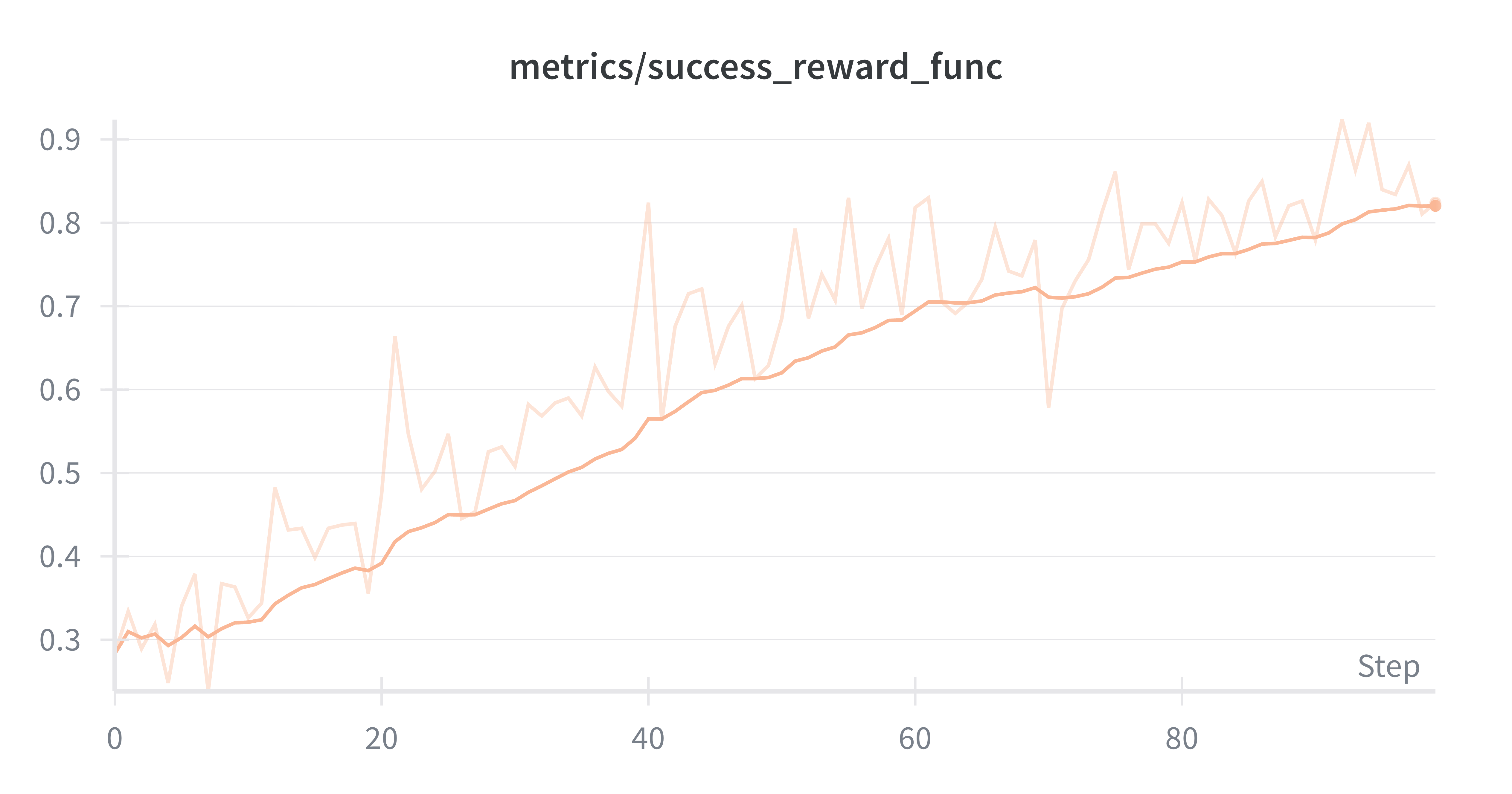

With the SFT’d baseline, I ran an RL experiment split in two. The first, for a 100 steps, which reached a mean score of ~5.1, already above GPT-4.1-mini.

The second run did 120 steps more, and with that the model reached a mean score of ~8.4, above models like Gemini3-Flash and Prime Intellect’s Intellect-3.

After the runs, the leaderboard looked like this:

The final leaderboard can be seen in the environment’s leaderboard tab.

The trained models showed fairly good performance for relatively short runs, but one main challenge surfaced with the experiments: exploding context length. At the start of the first training run the sequence length used was roughly 5k, whereas by the end of the second run, it was reaching levels around 13k. Similarly with the number of turns in the rollouts: an increase from a mean of ~30 turns per rollout to more than double at ~70 turns per rollout by the end of the training.

This challenge was all the more pressing considering that, the runs were made using the less efficient strategy of branching trajectories and that, naturally, having 2 players generating such trajectories meant that the effective sample size seen per training was a lot smaller than recommended configs, leading to noisier reward signal during training. It was clear that scaling-up the RL runs would lead to higher performance, but at the cost of learning from shorter, snappier experiments in smaller, simpler setups.

Before that, another set of experiments was made with Prime Intellect’s new Hosted Training Platform.

Hosted RL

Prime Intellect’s new hosted training platform facilitates training setups by abstracting away most of the infra work you need. It manages multi-tenant LoRA deployments for inference and training, that allows much more efficient and much cheaper training runs, by leveraging shared hardware across other runs.

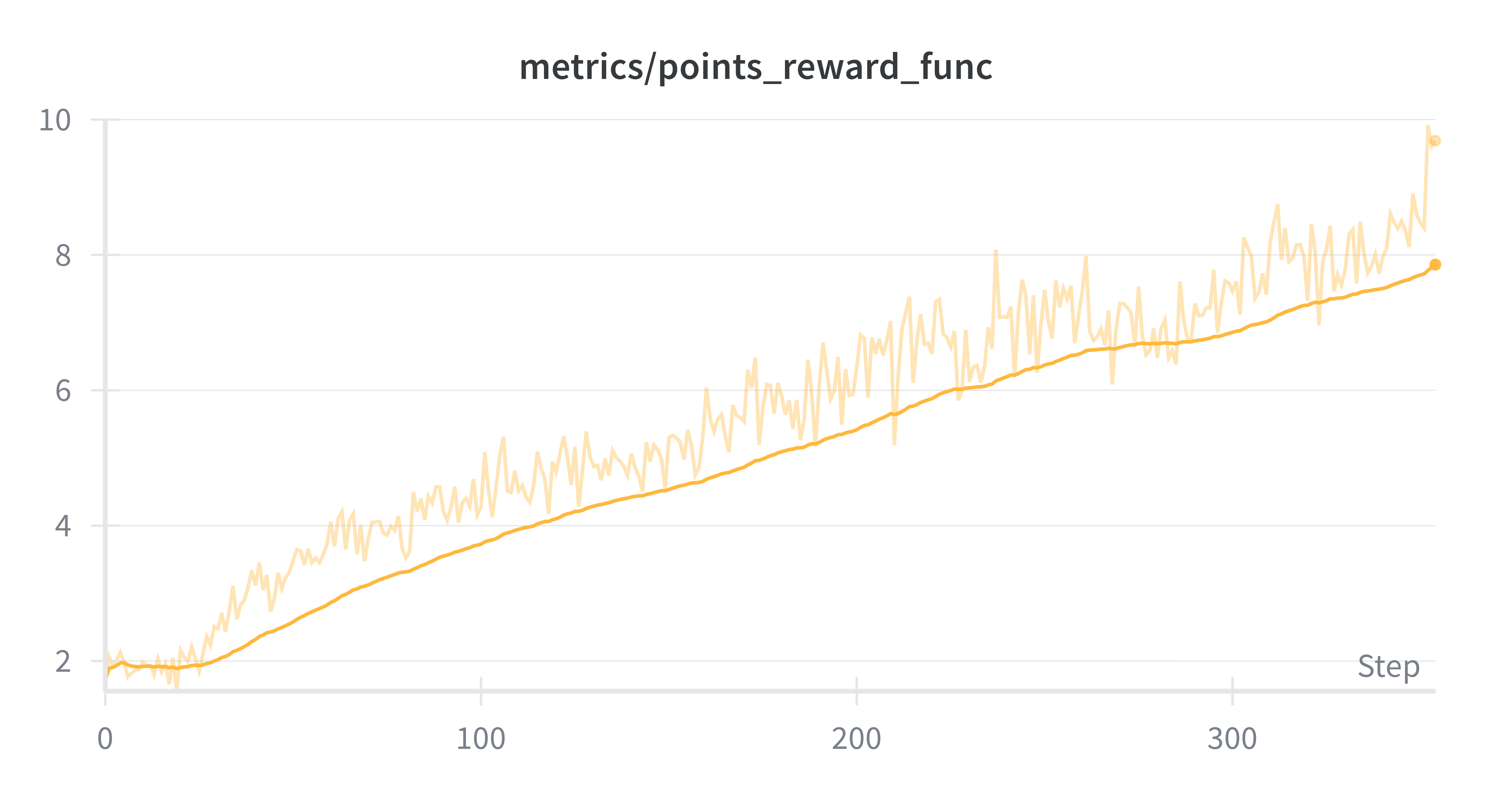

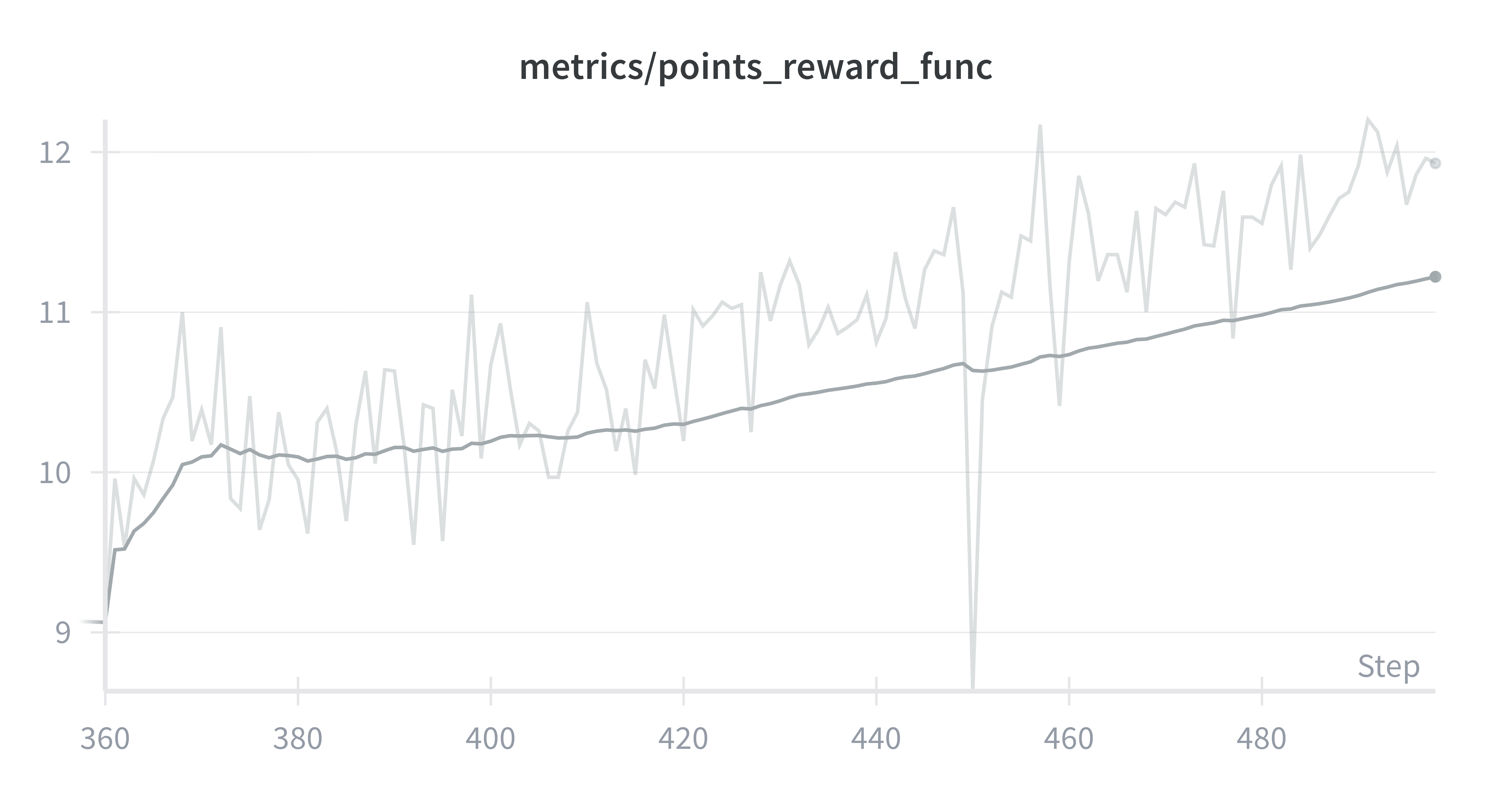

Following the same logic as the training runs above but with much more leeway for larger batch-sizes and longer runs, a Qwen/Qwen3-235B-A22B-Instruct-2507 model reached a mean score of nearly 10 points with no signs of stopping at ~350 steps.

Although this is not much more than the mean score reached in the previous run, it already places the model at the level of Gemini 3 Pro on the leaderboard, with a much more stable run and a much lower budget.

Leveraging the platform’s checkpointing functionality, I extended the run until it reached 500 steps, and a mean score of ~12.2, placing it near the top of the leaderboard.

Tiny Hanabi

Though it’s clear scaling-up the RL training runs would saturate the environment, I wanted to prove the hypothesis much faster and much more cheaply, given that this work is oriented towards informing better abstractions for multi-agent designs, and not so much to saturate the Hanabi env itself.

To do this, I implemented a tiny Hanabi, or, rather, I modified the environment to accept for different configurations of games in terms of colors, ranks and hand-sizes, and finally settled on a specific config with 3 colors, 3 ranks, and a hand-size of 2 for each player. Such config meant shrinking the game’s state-space considerably2, allowing for smaller training runs.

Also, to go one step further in the simplification, I went back to the XML-version of the game (no tool-calling) that meant it was easier to work with the smaller 0.6B and 1.7B non-instruct versions of Qwen3 again. Such environment can be found here.

Synthetic data and SFT warm-up

This time the synthetic data generation was done in two steps. First, as with the previous effort, a first dataset was generated using Grok4-fast. Unlike the previous dataset, this is composed of either perfect or near-perfect scores (for tiny Hanabi).

It only contains ~600 rollouts (i.e. 300 games, with 2 rollouts per game, one per player). In order to have a better coverage and higher-quality dataset a second dataset was generated in an expert-iteration loop. That is, I first ran a Qwen3-4B through SFT+RL, and then used the trained model to generate the second set of more thorough rollouts.

To keep things short, I will show the results of the training runs that used this second dataset. To give perspective, a Qwen3 1.7B model SFT’d on this data for 20 steps already managed to achieve a score of ~3.1, about 50% of the total tiny Hanabi score, unlike the case for standard Hanabi where the SFT only bumped the score to about 12% of the total.

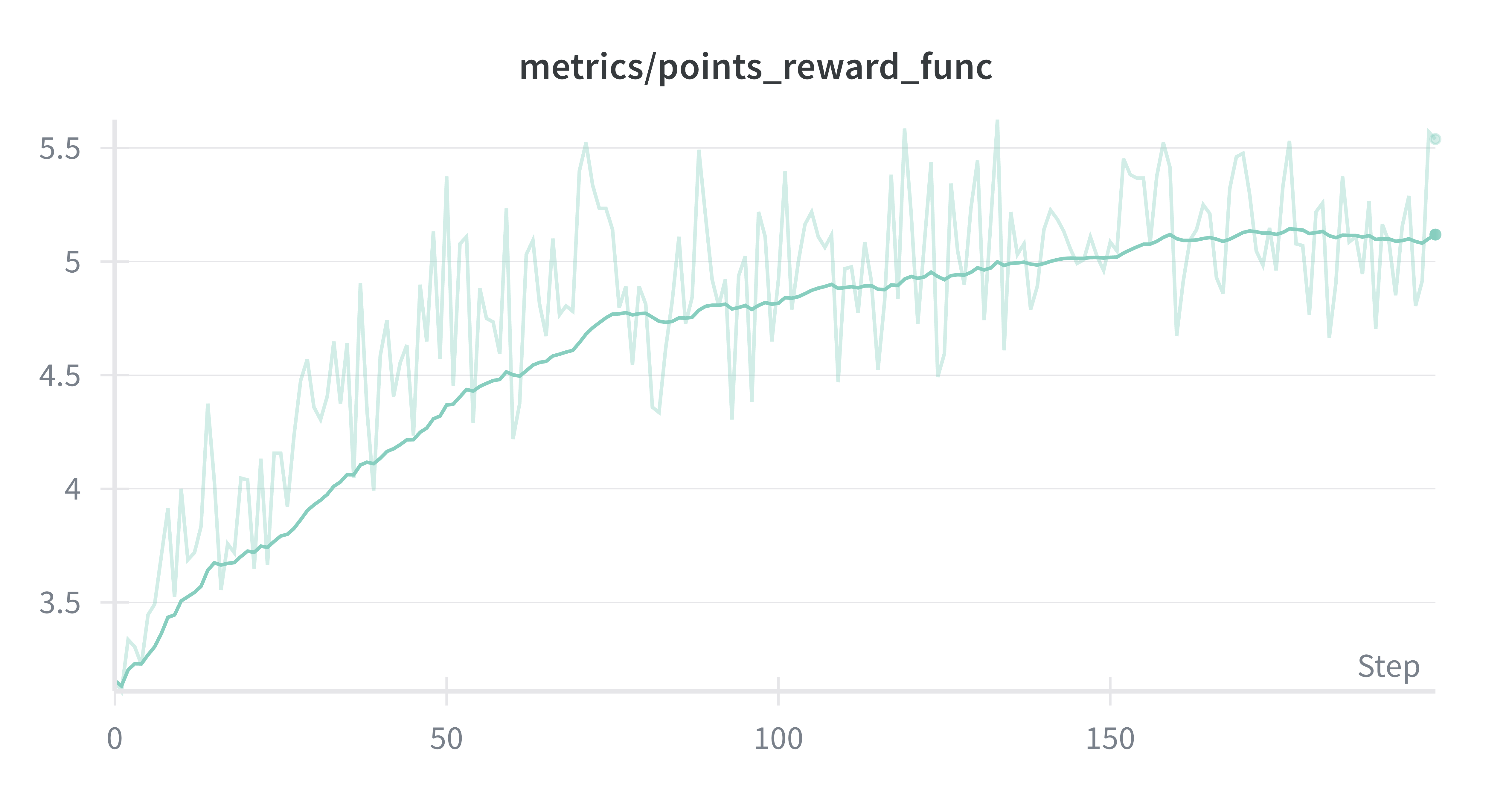

RL

An RL run over this SFT’d baseline quickly raises the model to top, reaching mean scores of 5.5 (out of 6); that is, 91% of the total score.

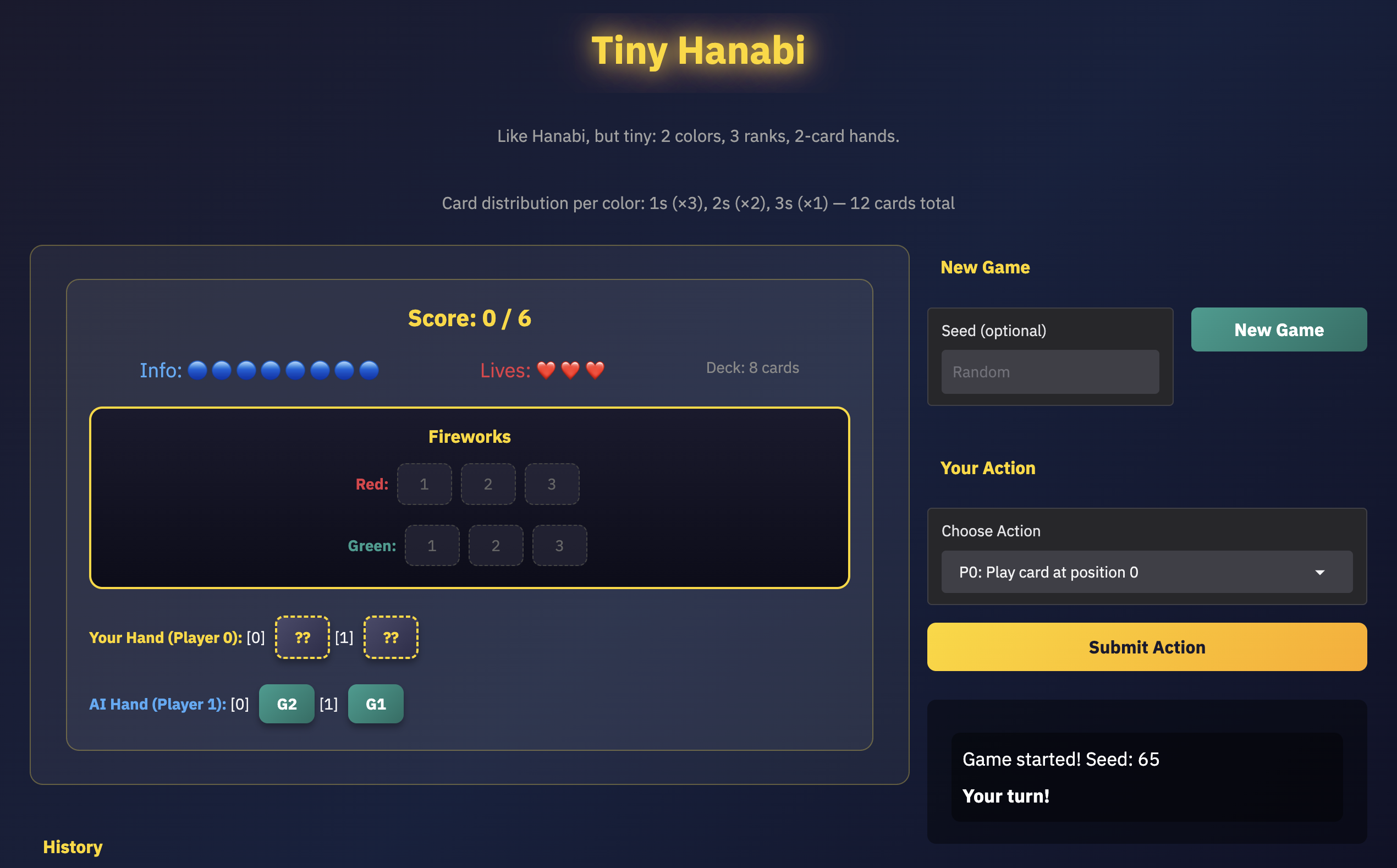

Demo

I vibe-coded a simple demo space where it’s possible to play tiny Hanabi with the trained model:

You will notice two things:

- It is still relatively hard to get a perfect score, even on tiny Hanabi.

- The trained model has its own quirks. As expected, it developed its own communication conventions! For instance, I noticed it will generally respond to color hints as indications that a card is playable. This is both good and bad: it’s probably a result of self-play. It learnt to play certain strategies and get high scores but does not adapt to other types of play.

Multi-Agent Designs

Multi-agent setups are obviously not limited to the kind of case that is covered in the previous experiments. Among many other considerations, a proper taxonomy would have to include things like:

- Reward structure - whether agents cooperate fully, like in Hanabi, or interact in a partial or full competitive environment.

- Information - do agents observe the state of the game fully, or is it only partially observable, like in the case of Hanabi.

- Training setup - are agents trained jointly, with the same policy (i.e. self-play) like in Hanabi, or are there multiple-policies (potentially one per-agent)?

- Communication - can agents communicate, and if so, how?

In this work, the scope is limited to a fairly small space of potential interactions, but even with this in mind, some extensions over verifiers’ abstractions can already be put in place. The Hanabi experiments above relied on pure joint-policy self-play with a shared reward, the simplest possible multi-agent setup. But the abstractions proposed here are designed to support two training modes: single-policy training (shared weights, with per-agent advantage estimation) and per-agent LoRA training (separate adapters on a shared base model). They also extend the reward system to support heterogeneous, per-agent payoffs.

A PR with the proposed changes can be found here, with corresponding training infrastructure changes in prime-rl. I will briefly explain the most important features.

Agent class

One of the most evidently awkward pieces of code in the original Hanabi implementation was the Player class. This class was needed to abstract away the logic of what each agent does, but should ideally be a shared artifact in the verifiers library.

In the changes proposed, the Agent class is a dataclass that representationally isolates each agent in a multi-agent env. It contains an identifier, a system prompt (potentially role-specific), and an is_trainable flag. This last one turned out to be more useful than I initially expected: when one agent fails to produce a valid action, only the offending agent receives a penalty gradient, while the “innocent” agent is temporarily frozen from training for that sample. This prevents one agent’s format failures from corrupting the other’s learning signal.

Protocol

The other essential piece is the Protocol class, which defines how the multiple agents interact in the environment. It specifies turn order and agent interaction patterns, separate from the task/environment logic. This allows the same protocol (e.g., round-robin) to be reused across different tasks.

MultiAgentEnv

Finally, the MultiAgentEnv extends MultiTurnEnv to integrate the Agent and the Protocol into the class that manages the turn-order logic, environment logic, and handling of each agent’s trajectories.

MultiAgentRewardFunc

One significant departure from the Hanabi implementation is the introduction of MultiAgentRewardFunc. Hanabi’s common payoff meant a single scalar reward sufficed: every agent got the same number. But the moment you step into games with heterogeneous payoffs, you need per-agent rewards. A MultiAgentRewardFunc returns a dict[str, float] mapping agent IDs to their individual rewards. On the Rubric side, this means computing per-agent advantages: the baseline for each agent is computed over rollouts where that agent played the same role, rather than a global average across all agents. This matters because in asymmetric games the different roles can have fundamentally different reward scales, and a global baseline would distort gradients for everyone.

Per-agent LoRA

All the experiments up to this point use a single shared policy, where the same weights control every agent. This is self-play in the strictest sense. But some games have roles so different that a shared policy can’t reasonably play both sides well. To support this, the prime-rl changes allow training with separate LoRA adapters per agent, all sharing the same base model. Each agent’s trajectories are routed to its own adapter during training, and policy updates load each adapter independently. This is much more memory-efficient than running separate full models, while still allowing each agent to specialize.

Refactored Hanabi

With the proposed changes above, the implementation of the Hanabi enviornment can be found here and extends the MultiAgentEnv with just some key lines, like the following:

for i in range(num_players):

agent = vf.Agent(

id=f"player_{i}",

system_prompt=generate_system_prompt(

self.config, player_id=i, num_players=num_players, thinking=thinking

),

is_trainable=True,

)

self.register_agent(agent)

where Agent instances are defined, and:

super().__init__(

dataset=train_dataset,

eval_dataset=eval_dataset,

max_turns=max_turns * num_players, # scale by number of players

protocol=vf.RoundRobinProtocol(agent_ids),

**kwargs,

)

where the MultiAgentEnv is instantiated with e RounRobinProtocol.

The rest of the environment logic is defined in the method on_turn_complete which lives in MultiAgentEnv and is defined to update the state of the environment after an agent’s actions.

Signaling and Coordination

To put the new abstractions to the test, I decided to implement two more environments, tightly related to Hanabi’s core dynamics: a Lewis Signaling Game and a pure Coordination Game. These two simple environments serve two purposes:

- disentangle some of the main elements of Hanabi: coordination and emergent communication

- as proofs/tests for the new proposed changes in verifiers

Lewis signaling games

A Lewis Signaling game is a type of signaling game that features perfect common interest between players, and where their success is determined by their capacity to form communication conventions. Both these features – emergent communication and common payoffs – is shared with Hanabi.

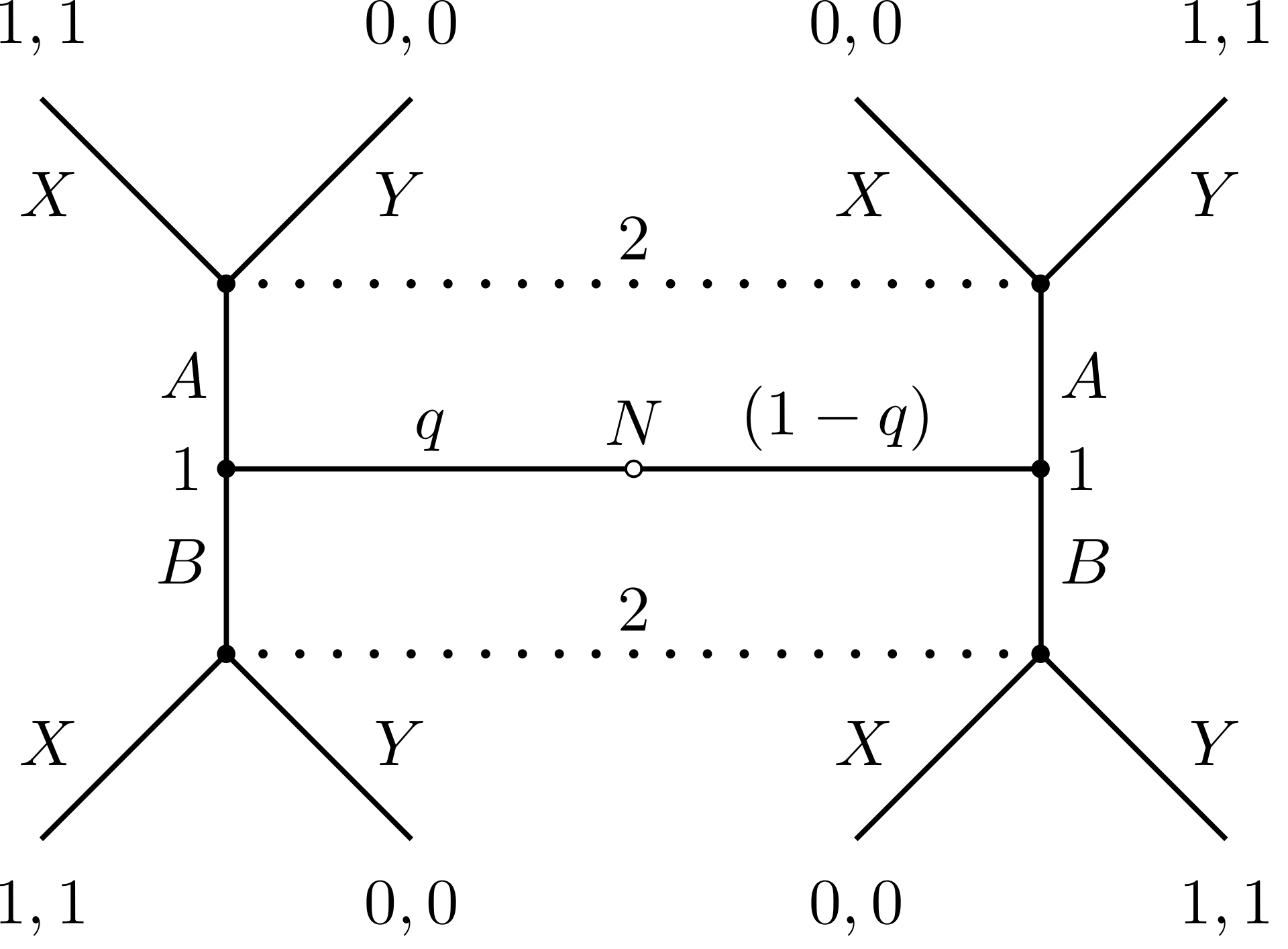

From Wikipedia:

The underlying game has two players, the sender and the receiver. The world can be in any of a number of states and the sender is aware of that state. The sender has at its disposal a fixed set of signals that it can send to the receiver. The receiver can observe the signal sent, but not the state of the world, and must take some action. For each state, there is a unique correct action and both the sender and receiver prefer that the receiver take the correct action in every state.

The extensive form representation of the game is the following, which illustrates the scenarios of common-payoff:

In my implementation, the “state” of the world is a (real) word (e.g. “apple”, “horse”). The sender sees this and a set of “distractors” from the same set of real words. The sender can pick a message from a vocabulary of alien words, meaningless strings like “snorf” or “blun”. The receiver then sees the real words (target and distractors) and must pick one based on the message. If it picks the target then both get a reward of 1, otherwise they get 0.

What is interesting about this environment is that no model – no matter how good – could get a high reward on this environment. There’s no semantic relation at all between the vocabulary of real words and the vocabulary of alien words. Success depends entirely on the ability for the models to learn a way to communicate about the state of the world through iterative experience; that is, forming a mapping between real and fake words.

It is also very easy for models to get quite good at this task. In the default setting, of 32 words, a quick RL run yields the following reward curve:

The following is the mapping3 learnt by the model during such training:

============================================================

LEARNED MAPPING SUMMARY

============================================================

Target Alien Word Count Confidence

------------------------------------------------------------

apple plax 6 60%

banana frag 6 60%

cherry frag 6 60%

grape frag 10 100%

lemon frag 10 100%

mango munt 10 100%

orange zorp 10 100%

peach frag 10 100%

book hask 5 50%

chair blun 8 80%

table plax 10 100%

lamp plax 10 100%

clock hask 10 100%

mirror glont 10 100%

window glont 7 70%

door blun 8 80%

bird frin 6 60%

cat blun 9 90%

dog blun 10 100%

fish fump 8 80%

horse werg 10 100%

mouse blun 10 100%

rabbit blun 7 70%

tiger jund 10 100%

cloud glont 6 60%

river gwim 9 90%

mountain munt 10 100%

forest glont 10 100%

ocean glont 4 40%

desert dask 10 100%

island yolt 10 100%

valley yolt 10 100%

============================================================

COLLISION ANALYSIS

============================================================

Alien words mapping to multiple targets:

blun: chair, door, cat, dog, mouse, rabbit

frag: banana, cherry, grape, lemon, peach

glont: mirror, window, cloud, forest, ocean

hask: book, clock

munt: mango, mountain

plax: apple, table, lamp

yolt: island, valley

Unused alien words (18): blick, blorf, criv, drix, frub, grix, holt, jask, kreb, kwist, quib, snorf, spax, threp, trel, vosh, yump, zang

Pure-coordination games

Coordination is also a core feature in Hanabi. In game theory, a coordination game is where players get higher payoffs when they select the same action as other players. The specific kinds of coordination games that have common-payoffs are called pure coordination games, and have the following shape:

| B1 | B2 | |

|---|---|---|

| A1 | a, a | b, b |

| A2 | b, b | a, a |

Again, in this kind of environment, there’s nothing that can make models any good at coordinating if there’s absolutely no correlation between their actions. In the implemented environment their actions are random 4-character letter strings with no inherent meaning so the key challenge is that actions have no prior focal points. The agents are faced with these actions:

afqo, nzjo, usie, fpva, kafn, vqwp, wpus, yicc, qahf, trxc

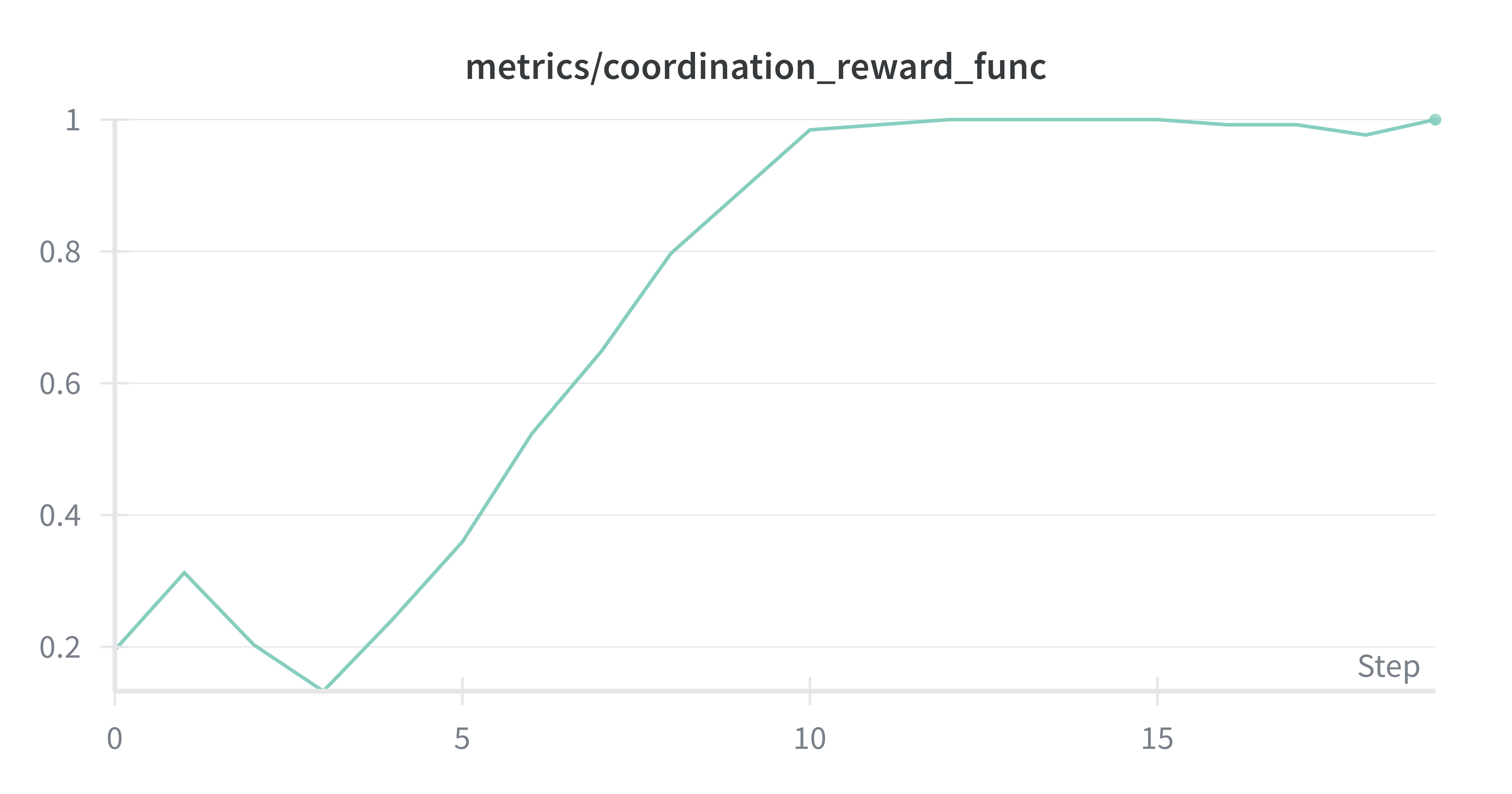

Models very quickly attain perfect rewards.

Out of those, the model converged, for no particular reason, on fpva. Of course, this is not surprising, the first instance that, by chance, coordinates the players will be reinforced until it is the default option by both players.

Beyond Cooperation

The signaling and coordination experiments above, like Hanabi, are common-payoff games. Every agent gets the same reward, so there’s no tension between individual and collective interest. But most interesting multi-agent interactions aren’t like that. To stress-test the proposed abstractions, I implemented four classic game-theory environments4, each introducing a new complication: heterogeneous rewards, asymmetric roles, partial information, and multi-policy training.

Prisoner’s Dilemma

The Prisoner’s Dilemma (environment) is the canonical example of tension between individual rewards and collective wellfare. Two players simultaneously choose an action. The payoff matrix:

| A | B | |

|---|---|---|

| A | 3, 3 | 0, 5 |

| B | 5, 0 | 1, 1 |

Action B (defection) is the dominant strategy: it’s better regardless of what the opponent does. The unique Nash equilibrium is mutual defection at (1, 1), even though mutual cooperation at (3, 3) would be better for both.

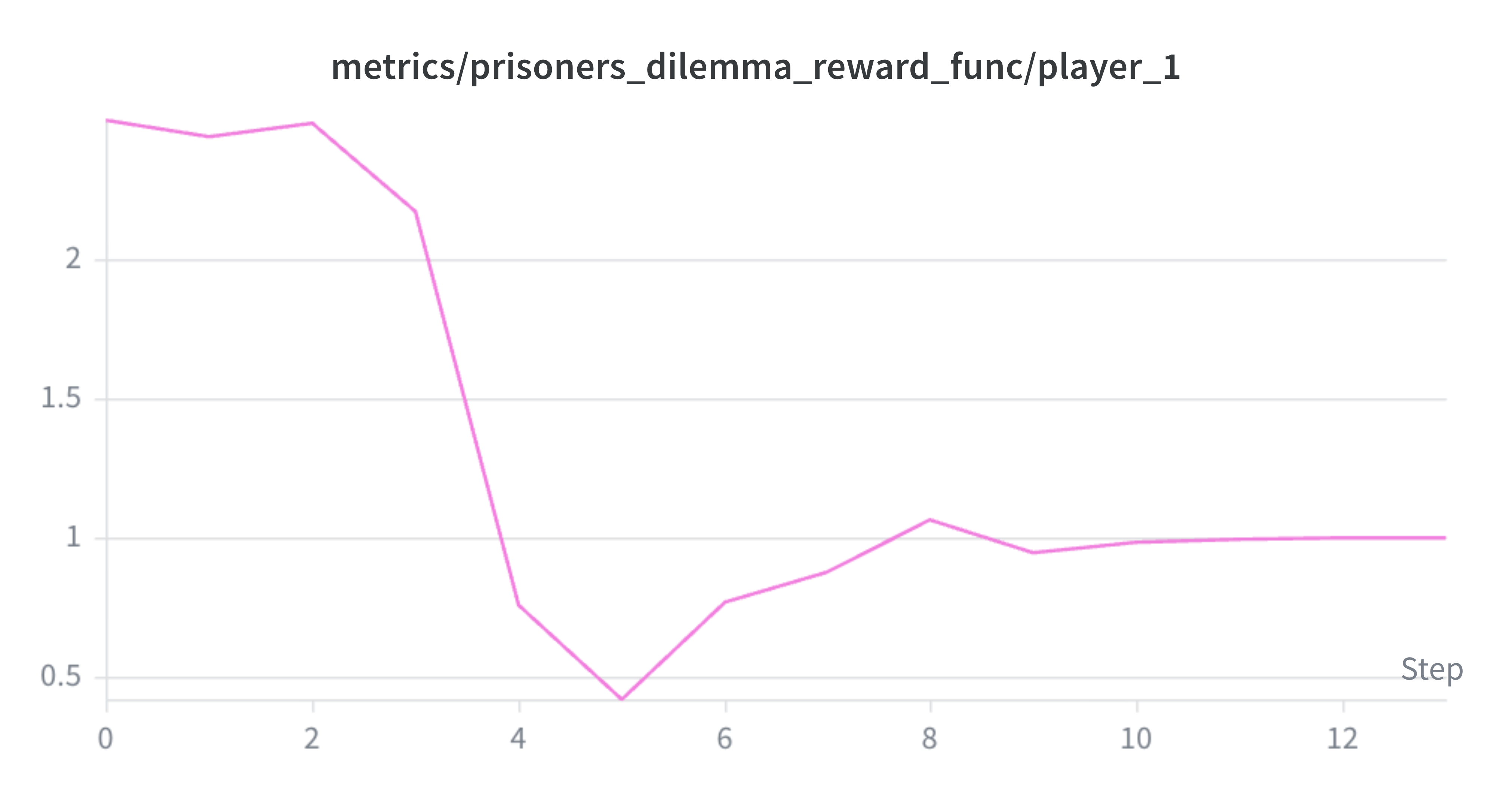

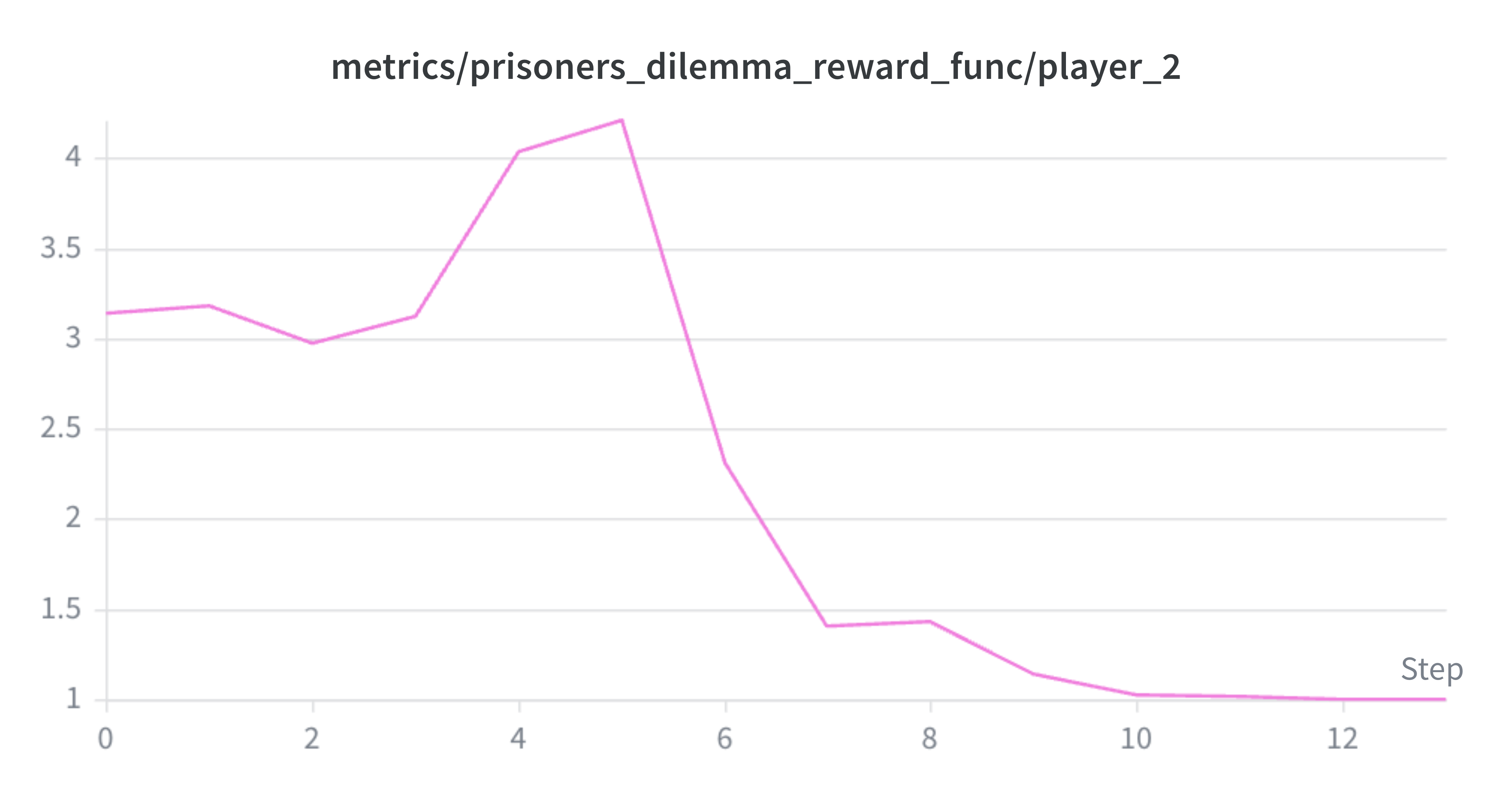

This is the first environment where the common-payoff assumption breaks and MultiAgentRewardFunc comes into play: each agent gets their own payoff depending on the joint action. The results from my short experiments are expected:

The per-player reward curves make the dynamics visible. Player 1 starts around ~2.4 (cooperating) and dips to ~0.4 by step 5 — it’s being exploited. Player 2, meanwhile, spikes to ~4.2 over the same period: it learned to defect while the other was still cooperating, collecting the temptation payoff. By step 10, player 1 catches up and both converge to 1.0, the Nash equilibrium. No cooperation survives the gradient.

Stag Hunt

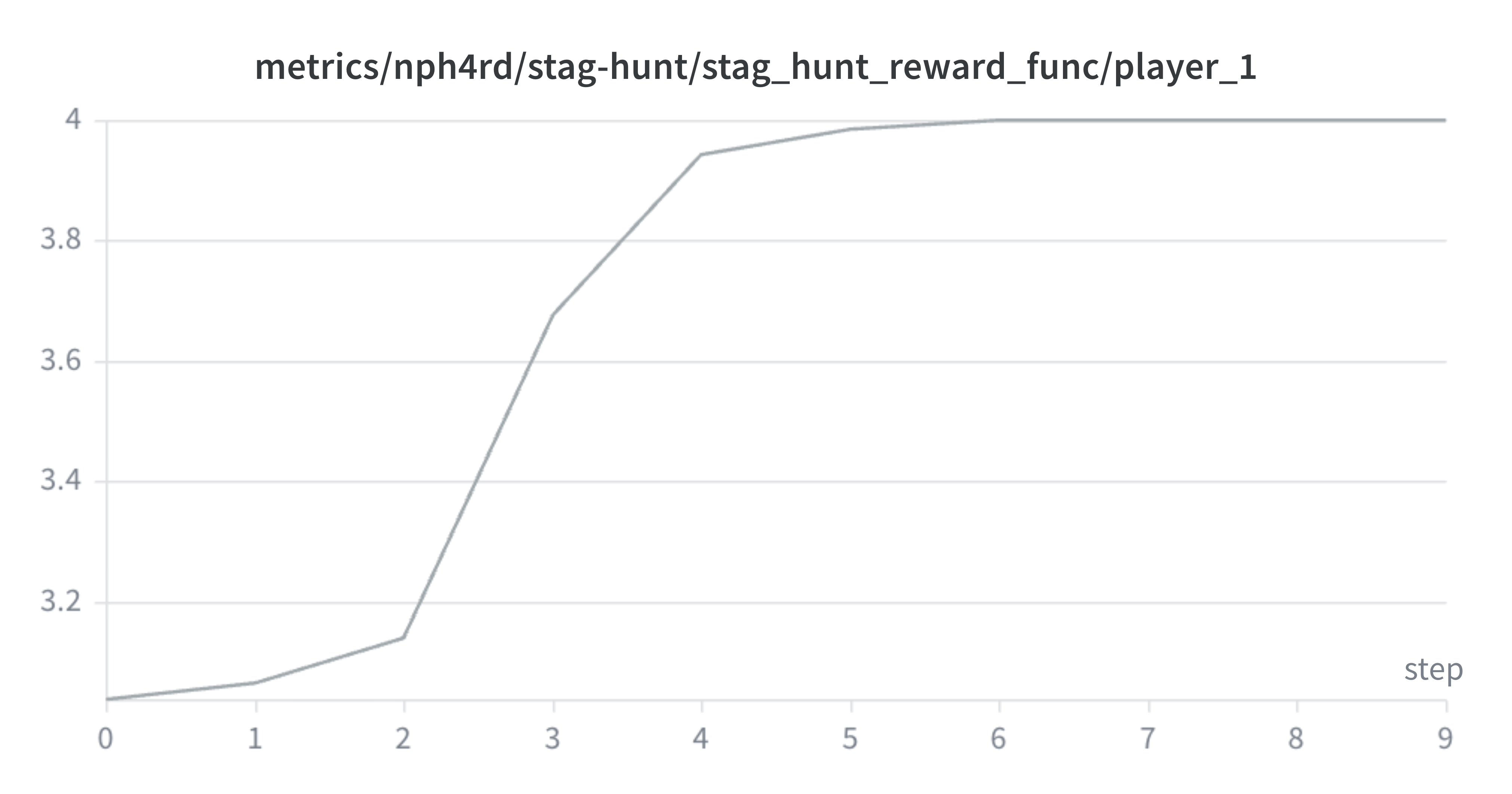

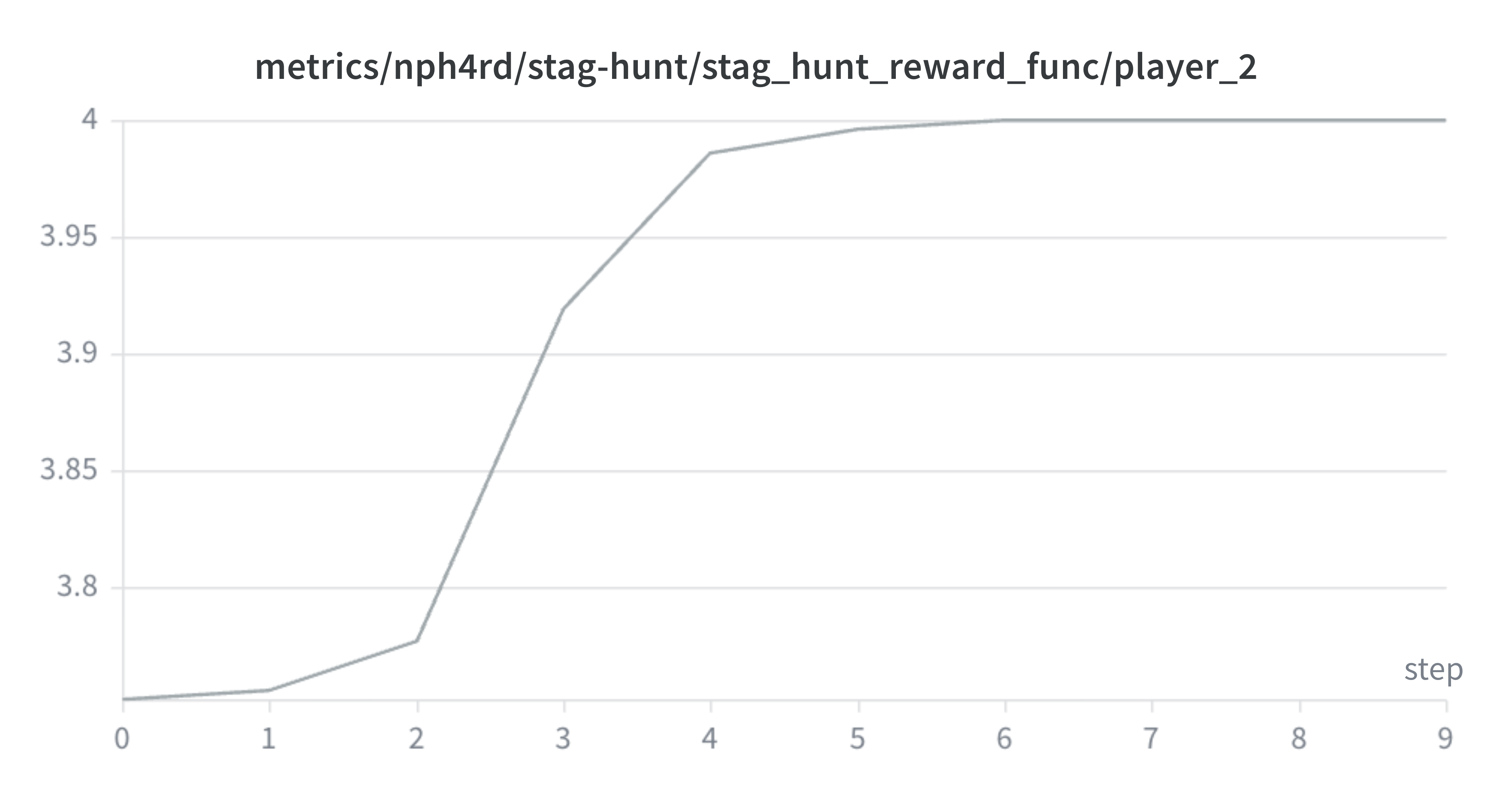

The Stag Hunt (environment) is a coordination game where two players simultaneously choose to hunt stag or hare. The payoff matrix:

| Stag | Hare | |

|---|---|---|

| Stag | 4, 4 | 0, 3 |

| Hare | 3, 0 | 2, 2 |

Unlike PD, the Stag Hunt has two Nash equilibria: both hunt stag (4, 4), the payoff-dominant one, and both hunt hare (2, 2), the risk-dominant one. Hunting stag is better if both players cooperate, but hunting hare is safe regardless of what the other does. The question is whether agents converge to the Pareto-optimal outcome that requires mutual trust, or settle for the safe, suboptimal one.

Nature and Appearance of Deer - from the Wikipedia entry, just because it's cool.

Like PD, the environment uses MultiAgentRewardFunc for per-agent payoffs.

Both players converge to the payoff-dominant equilibrium (4.0, 4.0) within about 5 steps. The curves are smooth and monotonic, starting around ~3.0 and rising steadily with no dips or exploitation phases. This is the opposite of what happened in PD: mutual trust wins. The starting rewards above 3.0 suggest the model was already leaning toward stag from the beginning (a mix of stag and hare would average lower), and RL just pushed it the rest of the way. The risk-dominant equilibrium (2.0, 2.0) never gets a foothold.

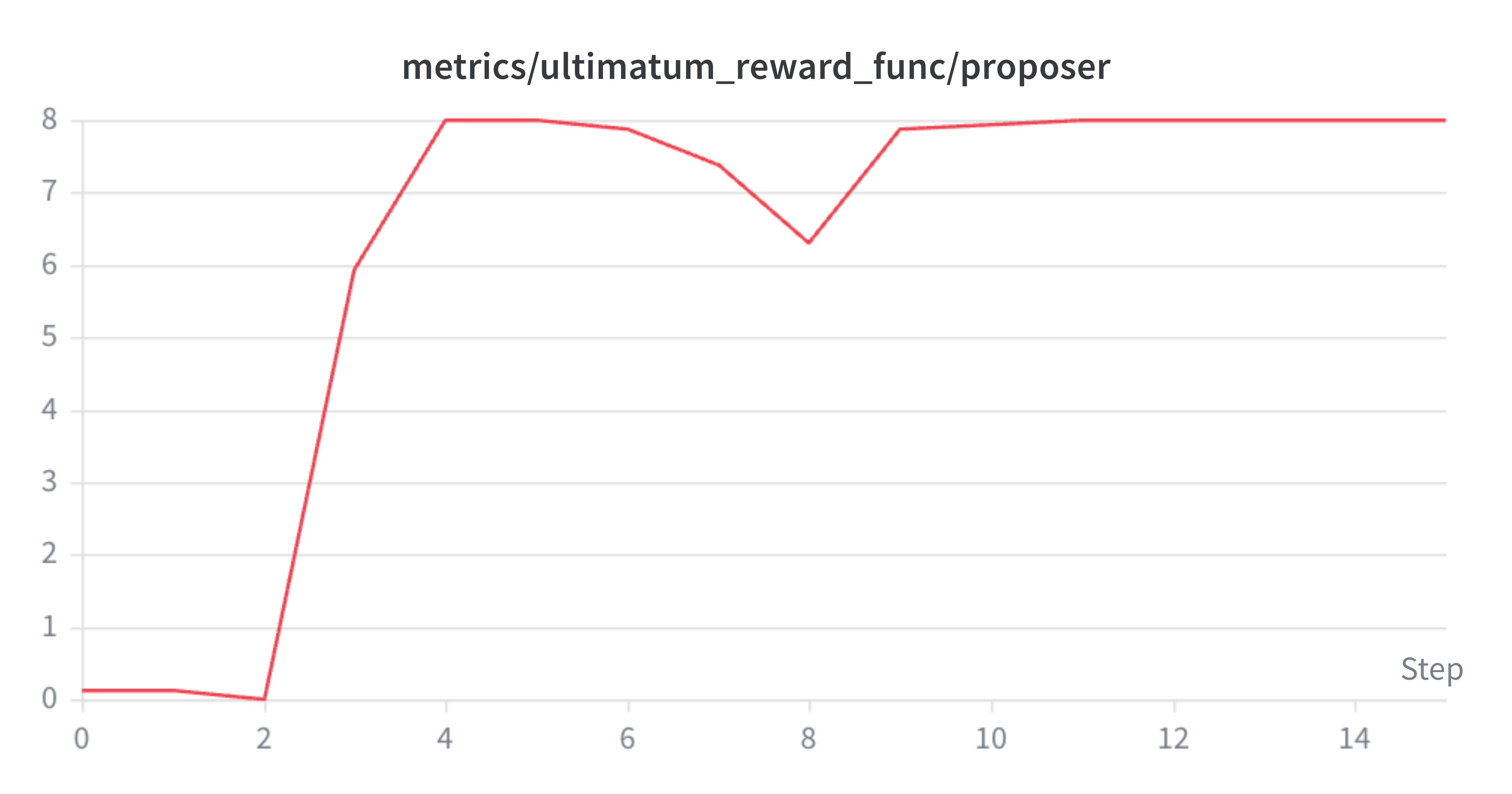

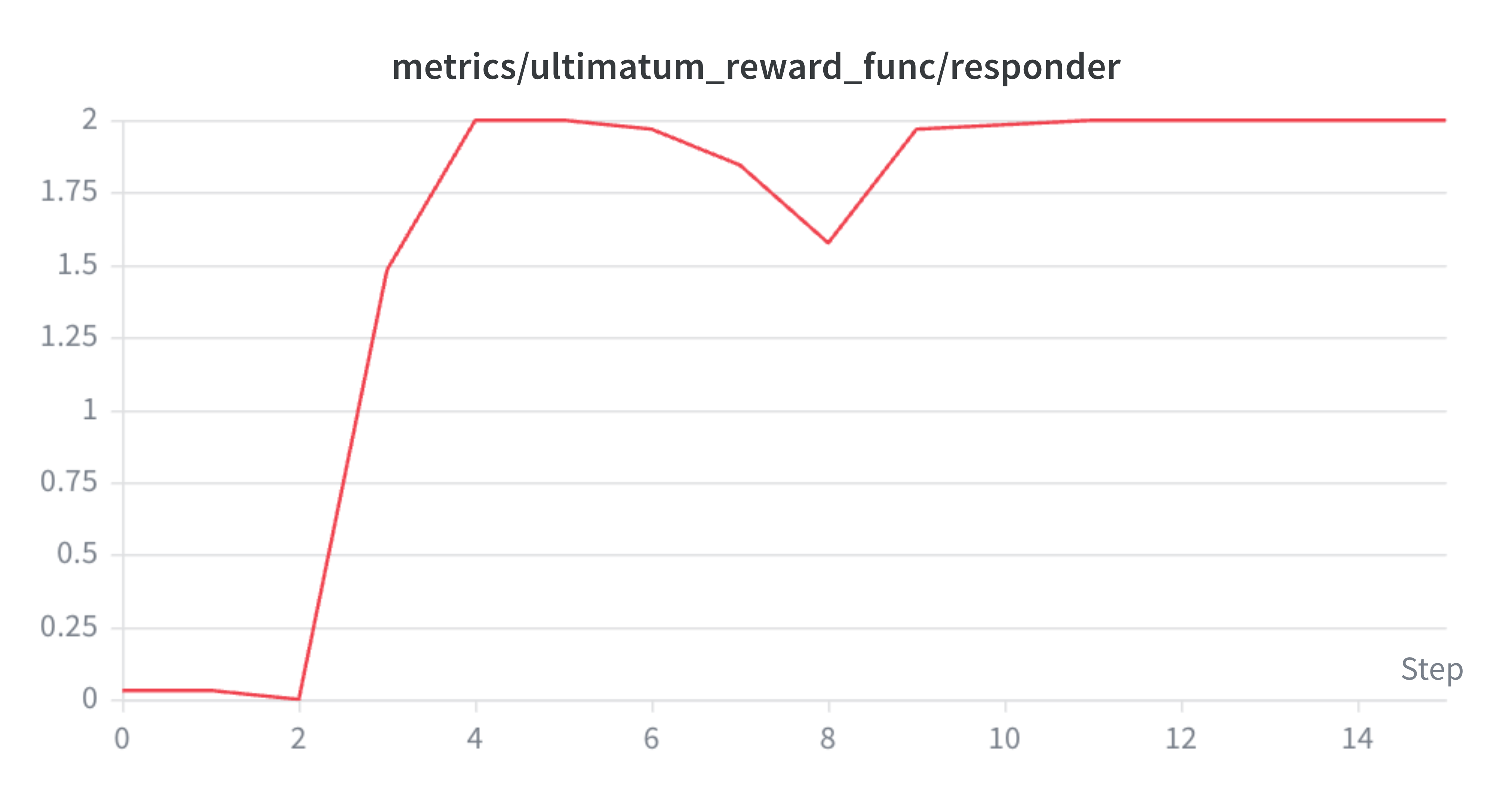

Ultimatum Game

The Ultimatum Game (environment) introduces role asymmetry. One player (the proposer) offers a split of 10 units from three options: low (8/2), fair (5/5), or high (2/8). The other player (the responder) accepts or rejects. If the responder rejects, both get nothing.

Game theory predicts the subgame-perfect equilibrium: the proposer offers the minimum, and the responder accepts, because any payoff beats zero. This is famously at odds with human behavior, where unfair offers are routinely rejected. But an RL-trained model has no spite or fairness norms, just gradients.

Both players start near zero reward with the responder rejecting offers. By step 3, the proposer jumps to ~8.0 and the responder to ~2.0: the model has found the subgame-perfect equilibrium (low offer + accept). There’s a brief dip around step 8, suggesting the responder briefly experimented with rejection again, but it doesn’t last. No fairness emerges.

Beyond the game-theoretic result, this is the first environment with genuinely asymmetric roles. The proposer and responder have different action spaces, different reward scales, and different strategic considerations. Per-agent advantage baselines are essential here: a global baseline would average the proposer’s 8.0 and the responder’s 2.0, distorting gradients for both.

Kuhn Poker

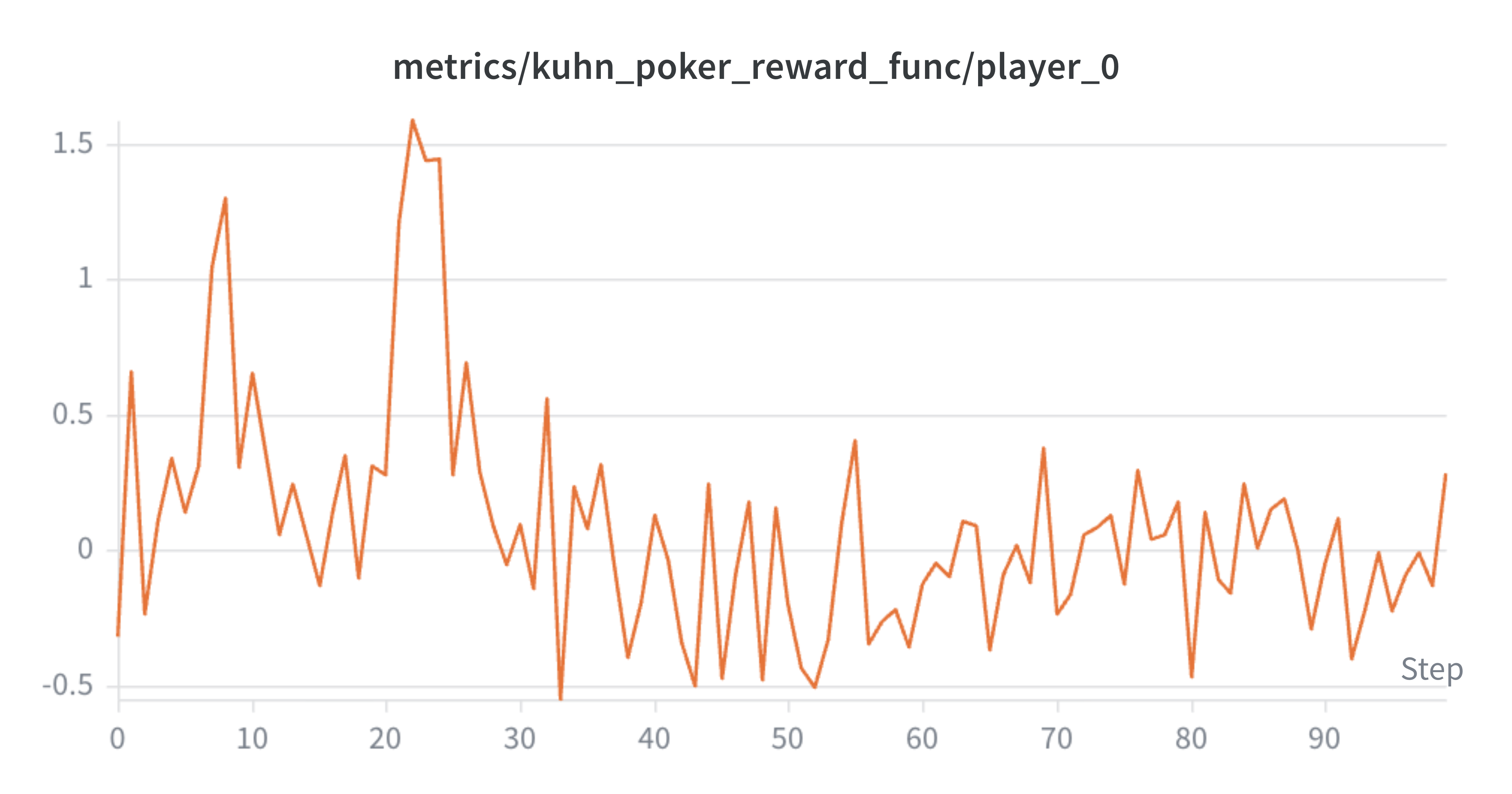

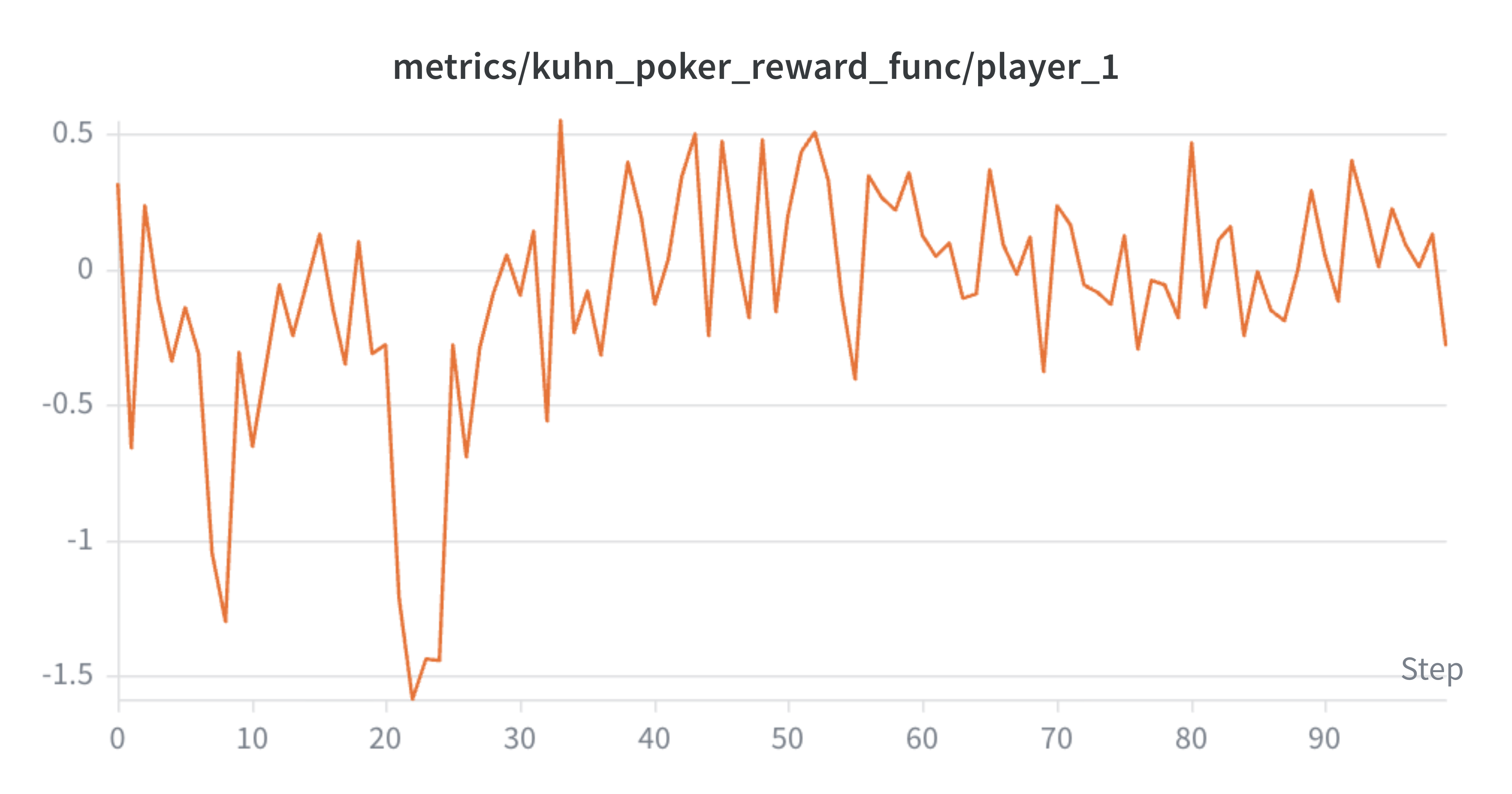

Kuhn Poker (environment) is where things get genuinely hard. It’s a simplified poker game with a 3-card deck (J < Q < K), where each player antes 1 chip and receives one card. Players can check, bet, call, or fold. The game has partial information (you see your card but not your opponent’s) and its Nash equilibrium requires mixed strategies: players must randomize their actions at specific frequencies. For instance, a Jack holder should bluff-bet 33% of the time, and a Queen holder should call bets 33–67% of the time.

Unlike the other environments, Kuhn Poker is presented as-is: the model knows the rules, sees its own card, and reasons about the game in chain-of-thought. This is also the only environment that uses separate LoRA adapters per agent. The reasoning is that in a game with imperfect information, a shared-weight self-play model can effectively “know” both hands through the weights; separate policies force genuine strategic reasoning under uncertainty. The environment also uses a custom KuhnPokerProtocol (turn order depends on game state, not a fixed round-robin) and per-agent heterogeneous rewards (chip-based).

I tracked NE convergence by extracting decisions from the model’s CoT traces at each checkpoint and comparing against the known equilibrium frequencies5. The convergence follows a clear hierarchy:

K converges first (by step 20–30): always bet, always call. J fold-to-bet follows shortly after (by step 30–40). Q checking takes longer (by step 50–60), because the model initially over-values Q as “the second-best card.” Finally, Q mixed call/fold briefly emerges at step 60: the model calls bets 33% of the time, right at the NE boundary.

At step 60 (the peak), 8 out of 9 tracked decision types fell within the NE-optimal range. Chain-of-thought was doing exactly what I hoped: the model would reason itself into calling some of the time and folding other times, depending on the trace. This is the mechanism by which CoT enables mixed strategies: it replaces a single-token action (which GRPO pushes toward a deterministic distribution) with a longer generation where different reasoning paths lead to different conclusions.

But by step 90, Call went extinct. The Q call rate had been falling steadily: 100% → 57% → 33% → 0%. It overshot past the equilibrium and died. Without CoT, action extinction happened even earlier (by step 50–65), so the reasoning traces buy roughly 30–40 extra steps of healthy training, but they don’t fix the underlying problem.

The per-player reward curves are near-perfect mirrors of each other, confirming the zero-sum structure. Player 0 spikes early (up to ~1.5 around step 20–25) while player 1 dips symmetrically to ~-1.5 — one policy is briefly exploiting the other. After step 30, both settle into noisy oscillation around zero, which is what you’d expect near Nash equilibrium: neither player can consistently extract value from the other.

The root cause is a fundamental tension between GRPO and mixed-strategy equilibria. Once folding with Q yields slightly higher average reward than calling (which happens as soon as the call rate drops below 33%), the gradient pushes further toward folding, which reduces the call rate further, which makes folding even better. There’s no restoring force. GRPO’s positive-feedback loop is hostile to the stochastic play that NE requires.

One thing that never emerged across any checkpoint was the Jack bluff. At NE, J should bluff-bet 33% of the time after the opponent checks; this makes King bets credible, since the opponent can’t tell them apart. But the model always concluded “J is the weakest card, betting is risky,” and the bluff never accumulated enough gradient signal to overcome this locally rational heuristic. Bluffing is globally necessary but locally costly, and GRPO can’t bridge that gap6.

Directions

The environments above, from Hanabi’s pure cooperation through the Prisoner’s Dilemma and Ultimatum to Kuhn Poker, show that the proposed abstractions can handle an increasing range of multi-agent interactions. Per-agent rewards, asymmetric roles, and separate LoRA policies all work. But the experiments also make clear where the current approach hits its limits.

Entropy and mixed strategies

The Kuhn Poker results point to a fundamental tension between GRPO and games that require stochastic play. Entropy regularization, minimum sampling temperatures, or population-based training might help sustain mixed strategies. In my view, this is the most important problem: there might be fundamental limitations with the underlying algorithm (or models), that prevents agents to reach mixed strategies, which are common in many environments.

Scaling up

The context-length explosion observed in the standard Hanabi runs is a practical bottleneck but not a fundamental one. Longer runs with larger batch sizes would push performance further. The hosted training results already hint at this: the 235B MoE model reached ~12 points with a stable run and no signs of plateauing.

Cross-play and population diversity

The conventions that emerge in self-play are effective but arbitrary and brittle. This is noticeable in the Tiny Hanabi demo. Training against diverse opponents, or maintaining a population of policies, could lead to stronger and more generalizable strategies. This connects naturally to the entropy problem: a diverse population might be exactly what’s needed to sustain the kind of stochastic play that single-policy GRPO kills. Multi-policy training via LoRA adapters already lay out the tooling for this, so leveraging it could be a great excercise.

Final word

The experiments here, from cooperative environments where RL pushes small models past frontier baselines to competitive games that expose fundamental limitations of current training methods, suggest there is a lot of low-hanging fruit still to be picked, and some genuinely hard problems just behind it. Billy Hoy, for instance, has been building similar abstractions with a slighly different focus (e.g. support for multiple base models per agent, simultaneous moves, and hierarchical environment spawning), so I think there are many exciting things coming! I hope this work turns out to be useful to him and others exploring this space, and I would like to thank the Prime Intellect team for giving me the opportunity to do so and for consistently going for the stag! <3

@misc{nph4rd2026hanabi,

author = {Marquez, Arturo},

title = {HANABI},

year = {2026},

url = {https://nph4rd.github.io/2026/02/23/hanabi.html},

note = {Blog post, nph4rd.github.io}

}

-

Concurrent to this work, Ramesh et al. also fine-tuned Qwen3-4B on Hanabi using GRPO/prime-rl – their approach uses a pre-collected dataset of move-level value annotations from o3 rather than online self-play. They have a much more thorough benchmarking effort (17 models) and interesting results showing Hanabi RL training transfers to out-of-domain tasks. ↩

-

I haven’t done the math here myself. Opus 4.6 seems to claim that it’s a state-space reduction of roughly 17+ orders of magnitude, with the following comparison table:

Metric Standard (5c/5r/h5) Tiny (2c/3r/h2) Reduction Card types 25 6 ~4x Deck size 50 12 ~4x Action space 20 9 ~2x State space ~10³⁰⁺ ~10¹³ ~10¹⁷x smaller In any case, I found this cool website that explains the complexity of games with a citation to this paper, claiming:

-

These are the alien words chosen by the sender when presented with a specific target over 10 trials. ↩

-

Except for Kuhn Poker, none of these environments are presented to the model as their classical selves. The models don’t know they’re playing the Prisoner’s Dilemma or the Ultimatum Game. Actions are masked behind random strings (e.g., “zxkm” and “qvnp” instead of “cooperate” and “defect”), the payoff structure is never disclosed, and there is no framing that could trigger pretraining knowledge about these games. The point is to see whether the correct equilibrium strategies emerge purely from reward signal, without any semantic scaffolding, and, as shown above, they do! ↩

-

Each checkpoint was evaluated on 8 rollouts. Decisions were extracted from model-generated text, classified by card held (J/Q/K) and decision context (opening, after opponent check, facing a bet), and compared against the known mixed-strategy Nash Equilibrium for Kuhn Poker. ↩

-

Work by Liu et al. (SPIRAL) trains Qwen3-4B-Base on Kuhn Poker via self-play with full-parameter updates and a shared policy. They don’t measure Nash equilibrium convergence, but find that Kuhn Poker training alone improves math reasoning by 8.6% and general reasoning by 8.4% — outperforming SFT on 25,000 expert trajectories. Their key insight is that the reasoning patterns emerging during training (case-by-case analysis, expected value calculation) transfer to out-of-domain benchmarks via the CoT traces, regardless of whether the agent reaches equilibrium play. This suggests the cognitive byproducts of the same training dynamics whose game-theoretic failure I document here are themselves valuable. They also independently arrive at per-role advantage baselines (their “Role-conditioned Advantage Estimation”), without which they observe thinking collapse — CoT traces shrink to near-zero length after ~200 steps. An interesting open question is whether the action extinction I observe (Q-call dying, J-bluff never emerging) also degrades the quality of transferred reasoning over longer runs, or whether the useful patterns are harvested early enough that it doesn’t matter. ↩